最新刊期

卷 31 , 期 2 , 2026

-

Input-output modalities in augmented reality: a two-dimensional survey of human-computer interaction AI导读

“介绍了其在增强现实领域的研究进展,相关专家构建了输入—输出双维度的AR交互技术全景框架,为解决多模态融合复杂度高、隐私安全隐患等核心挑战提供解决方案。” 摘要:Augmented reality (AR) technology has transcended the limitations of traditional 2D interactions relying on 3D spatial perception and multimodal interaction mechanisms. By seamlessly integrating digital content with the physical environment, AR systems offer users immersive experiences. In AR environments, users can navigate physical spaces while interacting with virtual objects through intuitive and natural behaviors. Such a demand has positioned AR technology at the forefront of human-computer interaction (HCI) research, attracting considerable academic and industrial attention across multiple fields. The rapid increase in consumer-grade AR devices——including Microsoft HoloLens, Meta Quest Pro, and Apple Vision Pro——has accelerated the technology’s adoption across various scenarios. This widespread deployment requires robust interaction frameworks that support AR’s unique operational demands. Different interaction modalities present diverse characteristics. For instance, head/face-based interaction is suitable for scenarios in which users’ hands are occupied, though it may lead to limited precisions. By contrast, hand-based manipulation offers greater flexibility and is good for tasks requiring precise control. Each interaction modality relies on specific hardware configurations. Applications employing bare-hand interaction must consider camera placement to ensure complete hand tracking for accurate interaction. Meanwhile, applications utilizing gaze interaction require careful consideration of eye-tracking apparatus installation. To this end, selecting appropriate interaction methods on the basis of task requirements while designing corresponding hardware support constitutes one of the key challenges hindering the widespread adoption of AR technologies. Unlike traditional computing environments, AR interactions occur in dynamic 3D spaces and engage multiple human sensory channels simultaneously. Consequently, effective AR systems must integrate diverse input modalities (e.g., hand gestures, voice commands, and eye tracking) with appropriate output feedback systems (e.g., haptic responses, spatialized audio, and olfactory cues). However, existing research landscapes reveal significant gaps in addressing these complex requirements. Current studies predominantly focus on isolated input modalities——such as gesture recognition algorithms or eye-tracking precision——or narrow application-specific implementations (e.g., museum guides or classroom applications). While these investigations provide valuable domain-specific insights, they fail to establish comprehensive frameworks that integrate multimodal information. More critically, the majority of AR HCI literature emphasizes input mechanisms while neglecting nonvisual feedback channels. This limitation results in a fragmented understanding of cross-sensory interaction paradigms, leaving critical questions regarding multimodal synchronization, sensory bandwidth constraints, and perceptual congruence. We propose a dual-dimensional analytical framework based on input and output modalities for AR interaction technologies. Through a comprehensive review of existing AR interaction paradigms——including their underlying principles, recent developments, core applications, and functional characteristics——we construct an integrated conceptual map to support future research. The input modalities are classified into speech recognition and gesture-based inputs, with the latter further subdivided into eye-, head motion-, and body movement-based interaction technologies on the basis of anatomical engagement. Output modalities are classified according to in accordance with the human sensory channels into vision, hearing, touch, smell, and taste. Our analysis reveals four fundamental challenges in contemporary AR interaction systems: 1) multimodal fusion complexity: real-time sensor fusion imposes significant computational demands. For instance, integrating high-frequency gesture tracking with voice recognition often exceeds the capabilities of current edge computing systems, resulting in perceptible visual latency. 2) Privacy-security concerns: AR devices equipped with cameras and microphones raise concerns about surveillance and data exposure. Meanwhile, neural interface technologies introduce new challenges surrounding the protection of neural data and cognitive privacy. 3) Input-output asymmetry: disproportionate development focus on input systems has created feedback channels incapable of matching the richness of user actions (e.g., advanced gesture recognition paired with rudimentary vibration feedback). 4) Interdisciplinary fragmentation: gaps between materials science (for working on flexible electronics), neuroscience (for studying multisensory perception), and computer engineering (for optimizing rendering pipelines) hinder comprehensive system-level optimization and innovation. Addressing these challenges requires deep integration across traditionally siloed disciplines. Materials science must advance the development of flexible, biocompatible sensors; cognitive neuroscience should quantify thresholds for effective multisensory integration; computer science needs to create adaptive algorithms for latency compensation; and computer ethics must establish robust frameworks for governing neural data. Only through such synergistic efforts can AR evolve beyond its current role as an interface enhancement tool into a transformative platform for human-machine cognitive collaboration. In summary, the proposed framework offers dual value to AR researchers and developers, particularly newcomers. On the one hand, it organizes fragmented technologies into a coherent structure, accelerating learning and helping readers navigate complex technical domains while identifying key research questions. On the other hand, through critical analysis of technical bottlenecks——such as cross-modal latency and limited sensory bandwidth——it highlights underexplored areas and inspires innovative solutions, avoiding redundant efforts and low-level repetition of existing work.关键词:augmented reality (AR); human-computer interaction; multi-modality; input modality; response111|184|0更新时间:2026-02-11

摘要:Augmented reality (AR) technology has transcended the limitations of traditional 2D interactions relying on 3D spatial perception and multimodal interaction mechanisms. By seamlessly integrating digital content with the physical environment, AR systems offer users immersive experiences. In AR environments, users can navigate physical spaces while interacting with virtual objects through intuitive and natural behaviors. Such a demand has positioned AR technology at the forefront of human-computer interaction (HCI) research, attracting considerable academic and industrial attention across multiple fields. The rapid increase in consumer-grade AR devices——including Microsoft HoloLens, Meta Quest Pro, and Apple Vision Pro——has accelerated the technology’s adoption across various scenarios. This widespread deployment requires robust interaction frameworks that support AR’s unique operational demands. Different interaction modalities present diverse characteristics. For instance, head/face-based interaction is suitable for scenarios in which users’ hands are occupied, though it may lead to limited precisions. By contrast, hand-based manipulation offers greater flexibility and is good for tasks requiring precise control. Each interaction modality relies on specific hardware configurations. Applications employing bare-hand interaction must consider camera placement to ensure complete hand tracking for accurate interaction. Meanwhile, applications utilizing gaze interaction require careful consideration of eye-tracking apparatus installation. To this end, selecting appropriate interaction methods on the basis of task requirements while designing corresponding hardware support constitutes one of the key challenges hindering the widespread adoption of AR technologies. Unlike traditional computing environments, AR interactions occur in dynamic 3D spaces and engage multiple human sensory channels simultaneously. Consequently, effective AR systems must integrate diverse input modalities (e.g., hand gestures, voice commands, and eye tracking) with appropriate output feedback systems (e.g., haptic responses, spatialized audio, and olfactory cues). However, existing research landscapes reveal significant gaps in addressing these complex requirements. Current studies predominantly focus on isolated input modalities——such as gesture recognition algorithms or eye-tracking precision——or narrow application-specific implementations (e.g., museum guides or classroom applications). While these investigations provide valuable domain-specific insights, they fail to establish comprehensive frameworks that integrate multimodal information. More critically, the majority of AR HCI literature emphasizes input mechanisms while neglecting nonvisual feedback channels. This limitation results in a fragmented understanding of cross-sensory interaction paradigms, leaving critical questions regarding multimodal synchronization, sensory bandwidth constraints, and perceptual congruence. We propose a dual-dimensional analytical framework based on input and output modalities for AR interaction technologies. Through a comprehensive review of existing AR interaction paradigms——including their underlying principles, recent developments, core applications, and functional characteristics——we construct an integrated conceptual map to support future research. The input modalities are classified into speech recognition and gesture-based inputs, with the latter further subdivided into eye-, head motion-, and body movement-based interaction technologies on the basis of anatomical engagement. Output modalities are classified according to in accordance with the human sensory channels into vision, hearing, touch, smell, and taste. Our analysis reveals four fundamental challenges in contemporary AR interaction systems: 1) multimodal fusion complexity: real-time sensor fusion imposes significant computational demands. For instance, integrating high-frequency gesture tracking with voice recognition often exceeds the capabilities of current edge computing systems, resulting in perceptible visual latency. 2) Privacy-security concerns: AR devices equipped with cameras and microphones raise concerns about surveillance and data exposure. Meanwhile, neural interface technologies introduce new challenges surrounding the protection of neural data and cognitive privacy. 3) Input-output asymmetry: disproportionate development focus on input systems has created feedback channels incapable of matching the richness of user actions (e.g., advanced gesture recognition paired with rudimentary vibration feedback). 4) Interdisciplinary fragmentation: gaps between materials science (for working on flexible electronics), neuroscience (for studying multisensory perception), and computer engineering (for optimizing rendering pipelines) hinder comprehensive system-level optimization and innovation. Addressing these challenges requires deep integration across traditionally siloed disciplines. Materials science must advance the development of flexible, biocompatible sensors; cognitive neuroscience should quantify thresholds for effective multisensory integration; computer science needs to create adaptive algorithms for latency compensation; and computer ethics must establish robust frameworks for governing neural data. Only through such synergistic efforts can AR evolve beyond its current role as an interface enhancement tool into a transformative platform for human-machine cognitive collaboration. In summary, the proposed framework offers dual value to AR researchers and developers, particularly newcomers. On the one hand, it organizes fragmented technologies into a coherent structure, accelerating learning and helping readers navigate complex technical domains while identifying key research questions. On the other hand, through critical analysis of technical bottlenecks——such as cross-modal latency and limited sensory bandwidth——it highlights underexplored areas and inspires innovative solutions, avoiding redundant efforts and low-level repetition of existing work.关键词:augmented reality (AR); human-computer interaction; multi-modality; input modality; response111|184|0更新时间:2026-02-11 - “本文介绍了现代制造业中缺陷检测的重要性,指出传统二维检测方法的局限性,强调了三维缺陷检测技术的优势和必要性,同时指出现有综述的不足,阐述了本文对三维缺陷检测技术进行全面回顾和探讨研究方向的意义。”

摘要:With the rapid development of modern manufacturing, consumers and producers have increasingly higher demands for product quality and safety. However, various defects such as dents, cracks, and bubbles inevitably occur during production, which may directly affect product performance. Therefore, early detection and accurate identification of defects are crucial for ensuring product quality and improving production efficiency. Traditional 2D defect detection methods only provide surface information of objects, making them inconsiderably effective in detecting deep or internal defects. Additionally, factors such as lighting, texture, and shadows can significantly influence the stability and robustness of 2D images in complex environments. In recent years, 3D defect detection technology has gradually become a research hotspot. By utilizing 3D data, such as point clouds and depth maps, it provides more comprehensive and accurate information, capturing the geometric shape, spatial distribution, and depth of objects, thereby enhancing detection accuracy and reliability. However, there is currently no systematic and comprehensive review article that tackles 3D defect detection technologies. This study aims to fill this gap by providing a thorough review of existing 3D defect detection methods and exploring future research directions. This paper first introduces the background of defect detection, highlighting the limitations of 2D defect detection technologies in detecting complex 3D objects and deep defects, and emphasizes the advantages of 3D defect detection technologies. Traditional 2D methods, which rely on image features such as texture analysis, edge detection, and morphological processing, have achieved certain success in surface defect detection. However, they struggle with complex 3D objects and internal defects because of their reliance on 2D images. By contrast, 3D defect detection technologies leverage point clouds and depth maps to provide a more comprehensive analysis of surface and internal structures, making them more suitable for modern industrial applications. Subsequently, this paper systematically summarizes the current research status of 3D defect detection from two perspectives: traditional and deep learning. In traditional methods, 3D defect detection is mainly divided into three categories: methods based on local features in point clouds, methods based on point cloud registration, and methods based on point cloud segmentation. Methods based on local features in point clouds detect defects by extracting local features such as depth, area, and slope, combined with threshold settings. These methods exhibit robustness and computational efficiency but lack utilization of global contextual information. For example, they can effectively detect common industrial defects like dents and protrusions but may miss defects that require a global understanding of the object’s structure. Methods based on point cloud registration align target point clouds with model data and identify defects by comparing differences. Their performance depends on the accuracy and efficiency of the registration algorithm. While these methods excel in utilizing global information and shape consistency, they face challenges in computational complexity and error accumulation. Methods based on point cloud segmentation detect defects by segmenting point clouds and extracting regional features. They excel in local feature extraction and fine-grained localization but may lose global information. These methods are computationally efficient in the preprocessing stage but may suffer from segmentation errors and parameter sensitivity. In deep learning methods, 3D defect detection is divided into point cloud-based methods and multimodal fusion-based methods. Point cloud-based methods automatically learn point cloud features through neural networks, making them suitable for complex surfaces. However, they involve intricate data processing and require significant computational resources. For instance, these methods can handle irregular shapes and textures but may struggle with real-time applications owing to their computational demands. Multimodal fusion-based methods combine information from multiple modalities such as RGB images and point cloud data, significantly improving detection accuracy and robustness. Specific methods include teacher-student network-based methods, memory bank-based methods, reconstruction-based methods, and methods leveraging contrastive language-image pretraining (CLIP). Teacher-student network-based methods achieve efficient feature learning through knowledge distillation or reverse distillation, offering advantages such as model lightweighting and high detection sensitivity. However, their performance heavily depends on the quality of the teacher network and the precision of feature matching. Memory bank-based methods enable rapid detection by storing multimodal features but incur high storage costs. Reconstruction-based methods analyze defect locations through reconstruction errors but exhibit low sensitivity to minor defects. These methods leverage the spatial and texture features of 3D data but require complex model training and high-quality reconstruction networks. CLIP-based methods leverage cross-modal characteristics for zero-shot detection, offering strong adaptability but requiring high computational costs. They are promising for their ability to generalize across different defect types but may need task-specific optimizations to improve performance. Moreover, this paper summarizes commonly used public datasets in the field of 3D defect detection, including the MVTec 3D Anomaly Detection(MVTec 3D-AD), Eyecandies, Real 3D-AD, Play-Doh made (PD-REAL), Anomaly ShapeNet, and Multi-pose Anomaly Detection datasets. These datasets provide various samples and annotations, enabling researchers to train, validate, and evaluate their methods effectively. This paper also introduces evaluation metrics such as the image-level area under the receiver operating characteristic curve (I-AUROC), pixel-level area under the receiver operating characteristic curve (P-AUROC), and area under the precision-recall operating curve (AUPRO), along with their definitions and calculation methods. I-AUROC measures a model’s ability to distinguish between normal and defective images at the image level, while P-AUROC evaluates pixel-level defect localization. AUPRO focuses on the overlap between predicted and actual defect regions, providing a more detailed assessment of detection performance. Finally, this study analyzes the current challenges in 3D defect detection, including data quality, large-scale data processing, real-time performance, and the integration of domain knowledge with algorithms. Data quality remains a critical issue, given that noisy or incomplete data can significantly affect detection accuracy. Large-scale data processing is another challenge, especially in industrial applications where real-time performance is essential. The integration of domain knowledge with advanced algorithms can enhance detection performance but requires interdisciplinary collaboration. This study further explores future research directions, such as cross-modal information fusion, the application of augmented reality and virtual reality technologies, and the optimization of real-time performance and efficiency. Cross-modal fusion can improve detection accuracy by combining complementary information from different data sources. Augmented reality and virtual reality technologies can enhance defect visualization and interaction, providing intuitive solutions for industrial applications. Real-time performance optimization is crucial for deploying 3D defect detection systems in production lines, where efficiency and speed are paramount. In conclusion, 3D defect detection is a critical and challenging task with broad applications in various fields. This paper provides a comprehensive review of traditional and deep learning methods in 3D defect detection, summarizes existing datasets and evaluation metrics, and discusses current challenges and future directions. By addressing these challenges and exploring new research avenues, this study aims to inspire further advancements in 3D defect detection technologies, ultimately contributing to improved product quality and manufacturing efficiency.关键词:defect detection;computer vision;3D vision;deep learning;multimodal;review276|425|0更新时间:2026-02-11

摘要:With the rapid development of modern manufacturing, consumers and producers have increasingly higher demands for product quality and safety. However, various defects such as dents, cracks, and bubbles inevitably occur during production, which may directly affect product performance. Therefore, early detection and accurate identification of defects are crucial for ensuring product quality and improving production efficiency. Traditional 2D defect detection methods only provide surface information of objects, making them inconsiderably effective in detecting deep or internal defects. Additionally, factors such as lighting, texture, and shadows can significantly influence the stability and robustness of 2D images in complex environments. In recent years, 3D defect detection technology has gradually become a research hotspot. By utilizing 3D data, such as point clouds and depth maps, it provides more comprehensive and accurate information, capturing the geometric shape, spatial distribution, and depth of objects, thereby enhancing detection accuracy and reliability. However, there is currently no systematic and comprehensive review article that tackles 3D defect detection technologies. This study aims to fill this gap by providing a thorough review of existing 3D defect detection methods and exploring future research directions. This paper first introduces the background of defect detection, highlighting the limitations of 2D defect detection technologies in detecting complex 3D objects and deep defects, and emphasizes the advantages of 3D defect detection technologies. Traditional 2D methods, which rely on image features such as texture analysis, edge detection, and morphological processing, have achieved certain success in surface defect detection. However, they struggle with complex 3D objects and internal defects because of their reliance on 2D images. By contrast, 3D defect detection technologies leverage point clouds and depth maps to provide a more comprehensive analysis of surface and internal structures, making them more suitable for modern industrial applications. Subsequently, this paper systematically summarizes the current research status of 3D defect detection from two perspectives: traditional and deep learning. In traditional methods, 3D defect detection is mainly divided into three categories: methods based on local features in point clouds, methods based on point cloud registration, and methods based on point cloud segmentation. Methods based on local features in point clouds detect defects by extracting local features such as depth, area, and slope, combined with threshold settings. These methods exhibit robustness and computational efficiency but lack utilization of global contextual information. For example, they can effectively detect common industrial defects like dents and protrusions but may miss defects that require a global understanding of the object’s structure. Methods based on point cloud registration align target point clouds with model data and identify defects by comparing differences. Their performance depends on the accuracy and efficiency of the registration algorithm. While these methods excel in utilizing global information and shape consistency, they face challenges in computational complexity and error accumulation. Methods based on point cloud segmentation detect defects by segmenting point clouds and extracting regional features. They excel in local feature extraction and fine-grained localization but may lose global information. These methods are computationally efficient in the preprocessing stage but may suffer from segmentation errors and parameter sensitivity. In deep learning methods, 3D defect detection is divided into point cloud-based methods and multimodal fusion-based methods. Point cloud-based methods automatically learn point cloud features through neural networks, making them suitable for complex surfaces. However, they involve intricate data processing and require significant computational resources. For instance, these methods can handle irregular shapes and textures but may struggle with real-time applications owing to their computational demands. Multimodal fusion-based methods combine information from multiple modalities such as RGB images and point cloud data, significantly improving detection accuracy and robustness. Specific methods include teacher-student network-based methods, memory bank-based methods, reconstruction-based methods, and methods leveraging contrastive language-image pretraining (CLIP). Teacher-student network-based methods achieve efficient feature learning through knowledge distillation or reverse distillation, offering advantages such as model lightweighting and high detection sensitivity. However, their performance heavily depends on the quality of the teacher network and the precision of feature matching. Memory bank-based methods enable rapid detection by storing multimodal features but incur high storage costs. Reconstruction-based methods analyze defect locations through reconstruction errors but exhibit low sensitivity to minor defects. These methods leverage the spatial and texture features of 3D data but require complex model training and high-quality reconstruction networks. CLIP-based methods leverage cross-modal characteristics for zero-shot detection, offering strong adaptability but requiring high computational costs. They are promising for their ability to generalize across different defect types but may need task-specific optimizations to improve performance. Moreover, this paper summarizes commonly used public datasets in the field of 3D defect detection, including the MVTec 3D Anomaly Detection(MVTec 3D-AD), Eyecandies, Real 3D-AD, Play-Doh made (PD-REAL), Anomaly ShapeNet, and Multi-pose Anomaly Detection datasets. These datasets provide various samples and annotations, enabling researchers to train, validate, and evaluate their methods effectively. This paper also introduces evaluation metrics such as the image-level area under the receiver operating characteristic curve (I-AUROC), pixel-level area under the receiver operating characteristic curve (P-AUROC), and area under the precision-recall operating curve (AUPRO), along with their definitions and calculation methods. I-AUROC measures a model’s ability to distinguish between normal and defective images at the image level, while P-AUROC evaluates pixel-level defect localization. AUPRO focuses on the overlap between predicted and actual defect regions, providing a more detailed assessment of detection performance. Finally, this study analyzes the current challenges in 3D defect detection, including data quality, large-scale data processing, real-time performance, and the integration of domain knowledge with algorithms. Data quality remains a critical issue, given that noisy or incomplete data can significantly affect detection accuracy. Large-scale data processing is another challenge, especially in industrial applications where real-time performance is essential. The integration of domain knowledge with advanced algorithms can enhance detection performance but requires interdisciplinary collaboration. This study further explores future research directions, such as cross-modal information fusion, the application of augmented reality and virtual reality technologies, and the optimization of real-time performance and efficiency. Cross-modal fusion can improve detection accuracy by combining complementary information from different data sources. Augmented reality and virtual reality technologies can enhance defect visualization and interaction, providing intuitive solutions for industrial applications. Real-time performance optimization is crucial for deploying 3D defect detection systems in production lines, where efficiency and speed are paramount. In conclusion, 3D defect detection is a critical and challenging task with broad applications in various fields. This paper provides a comprehensive review of traditional and deep learning methods in 3D defect detection, summarizes existing datasets and evaluation metrics, and discusses current challenges and future directions. By addressing these challenges and exploring new research avenues, this study aims to inspire further advancements in 3D defect detection technologies, ultimately contributing to improved product quality and manufacturing efficiency.关键词:defect detection;computer vision;3D vision;deep learning;multimodal;review276|425|0更新时间:2026-02-11 - “介绍了超声舌成像在语言学等领域的研究进展,专家借助深度学习攻克了超声舌成像处理难题,为语音工程应用等开辟了新方向。”

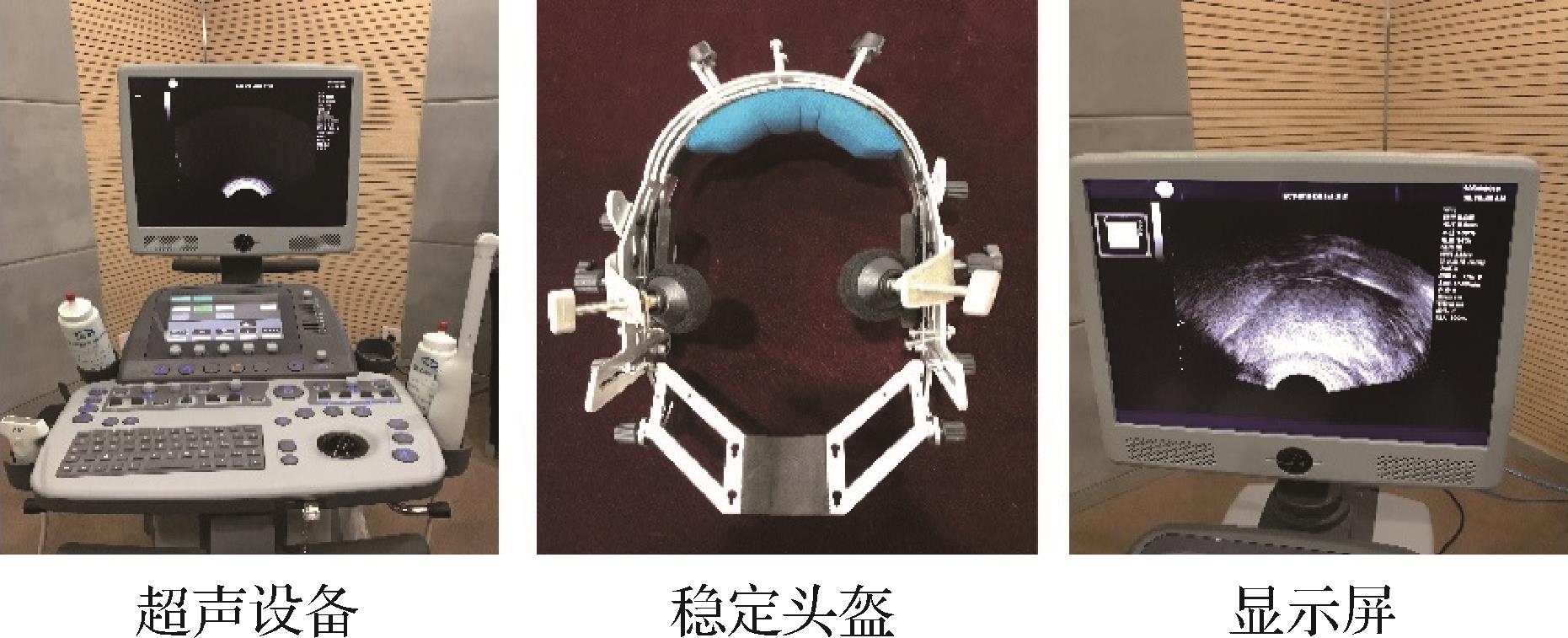

摘要:Ultrasound imaging devices enable dynamic visualization and recording of articulatory physiology, specifically the tongue, during speech production. The processing and analysis of ultrasound tongue imaging (UTI) data hold significant importance for practical linguistics, experimental phonetic research, and speech engineering applications. Within these domains, the dynamic analysis of tongue movement trajectories and the quantitative analysis of lingual posture based on tongue contour extraction constitute critical technical challenges in practical implementation. However, UTI analysis faces substantial difficulties due to inherent noise characteristics, such as image blurring and indistinct tongue boundaries. These challenges manifest primarily as obstacles in accurately extracting key lingual positional features and reliably tracking dynamic tongue contours throughout speech sequences. Deep learning (DL) techniques, leveraging their powerful capacity for hierarchical feature learning and representation, have demonstrated considerable success in advancing UTI processing and analysis. Significant progress has been achieved in several core areas, including the automated extraction of tongue contours from noisy ultrasound frames, the construction of sophisticated models representing complex tongue motion dynamics, and the facilitation of deeper integration into advanced speech engineering applications. This comprehensive overview details the fundamental principles of medical ultrasound imaging and its specialized adaptation for lingual visualization (i.e., UTI). It systematically examines the persistent challenges encountered in UTI data processing. Furthermore, it concisely outlines the methodological foundations of DL, encompassing key architectures like convolutional neural networks, recurrent neural networks, and their variants (e.g., long short-term memory networks), which are particularly suited for spatial and spatiotemporal data analyses. The core of this review is that it synthesizes and critically evaluates the modeling mechanisms and specific applications of DL techniques across various tasks within UTI processing and analysis. Key application areas include, but are not limited to the following: ultrasound-video synchronization: precise temporal alignment of ultrasound image sequences with concurrently recorded video (e.g., lip movement) and acoustic signals for multimodal analysis. Biometric identification: exploiting unique individual tongue movement patterns derived from UTI for speaker recognition or verification systems. Multimodal learning: integrating UTI data with complementary modalities such as audio signals, electromagnetic articulography, or visual speech information (video) to build robust and comprehensive models of speech production using fusion techniques. Silent speech interfaces (SSIs): enabling speech recognition or synthesis solely from articulatory movements captured by UTI, which is particularly valuable in noisy environments or for individuals with voice impairments. Acoustic-articulatory inversion modeling: predicting the underlying articulatory configurations (represented by UTI data) from the acoustic speech signal or synthesizing speech from articulatory data. Language learning and speech rehabilitation: providing visual biofeedback of tongue positioning and movement to second language learners aiming to master new phonemes or to individuals undergoing therapy for speech sound disorders (e.g., apraxia and dysarthria). Innovative interaction and artistic expression: exploring novel human-computer interaction paradigms and artistic performances utilizing real-time tongue movement tracking. For each application domain, this review systematically delineates the underlying theoretical frameworks, specific technical methodologies employed (detailing network architectures, input representations, loss functions, and training protocols), and established performance evaluation metrics used to assess the efficacy of the proposed DL solutions. Common metrics include contour extraction accuracy (e.g., Dice coefficient and Hausdorff distance), tracking error, recognition accuracy, synthesis quality (e.g., Mel cepstral distortion), and inference speed. Finally, this review critically examines the current state of the field and outlines compelling research prospects and future trends for DL in UTI processing and analysis. These aspects include the following: development of more efficient and lightweight models: enabling real-time processing on mobile or embedded devices for practical applications like wearable SSI or biofeedback tools. Enhanced robustness and generalization: creating models that perform reliably across diverse speakers, accents, imaging devices, and recording conditions, potentially through advanced domain adaptation, data augmentation, or self-supervised/semisupervised learning paradigms. Explainable artificial intelligence (AI) for UTI: moving beyond black-box predictions to develop interpretable DL models that provide insights into how articulatory features are learned and utilized, fostering trust and deep linguistic understanding. Integration with advanced speech synthesis (vocoding): combining high-quality articulatory-to-acoustic inversion models with state-of-the-art neural vocoders for natural-sounding speech synthesis from UTI. Large-scale, multispeaker UTI datasets: facilitating the training of generalizable and powerful models through collaborative efforts to create and share comprehensive, annotated datasets. Exploration of self-supervised and unsupervised learning: reducing the heavy reliance on manually annotated UTI data by leveraging the inherent structure within unlabeled ultrasound sequences. Cross-lingual and low-resource adaptation: developing techniques to effectively apply models trained on data-rich languages to under-resourced languages with limited UTI data. Combining UTI with other biosignals: investigating fusion with neural signals (e.g., EEG) or other physiological data for next-generation brain-computer interfaces or advanced speech rehabilitation monitoring. Future progress hinges on sustained interdisciplinary collaboration between linguists, speech scientists, computer vision researchers, machine learning experts, and engineers. Beyond technical advancements, the ethical deployment and accessibility of UTI-DL systems warrant significant attention. Ensuring data privacy in biometric applications, addressing algorithmic bias across diverse populations, and developing cost-effective solutions for clinical and educational settings are crucial for responsible innovation. Furthermore, establishing standardized evaluation benchmarks and open-source frameworks will accelerate reproducibility and community-driven progress. The continued integration and innovation in computer science, particularly in AI and machine learning, alongside advancements in ultrasound imaging technology, are anticipated to significantly propel scientific research and disciplinary development within experimental phonetics and linguistics. DL stands as a pivotal catalyst, unlocking the full potential of UTI as a powerful tool for understanding speech production, developing novel technologies, and enhancing human communication and well-being.关键词:deep learning(DL);ultrasonic tongue imaging(UTI);articulatory physiological tongue body;speech engineering;speech recognition;speech synthesis82|99|0更新时间:2026-02-11

摘要:Ultrasound imaging devices enable dynamic visualization and recording of articulatory physiology, specifically the tongue, during speech production. The processing and analysis of ultrasound tongue imaging (UTI) data hold significant importance for practical linguistics, experimental phonetic research, and speech engineering applications. Within these domains, the dynamic analysis of tongue movement trajectories and the quantitative analysis of lingual posture based on tongue contour extraction constitute critical technical challenges in practical implementation. However, UTI analysis faces substantial difficulties due to inherent noise characteristics, such as image blurring and indistinct tongue boundaries. These challenges manifest primarily as obstacles in accurately extracting key lingual positional features and reliably tracking dynamic tongue contours throughout speech sequences. Deep learning (DL) techniques, leveraging their powerful capacity for hierarchical feature learning and representation, have demonstrated considerable success in advancing UTI processing and analysis. Significant progress has been achieved in several core areas, including the automated extraction of tongue contours from noisy ultrasound frames, the construction of sophisticated models representing complex tongue motion dynamics, and the facilitation of deeper integration into advanced speech engineering applications. This comprehensive overview details the fundamental principles of medical ultrasound imaging and its specialized adaptation for lingual visualization (i.e., UTI). It systematically examines the persistent challenges encountered in UTI data processing. Furthermore, it concisely outlines the methodological foundations of DL, encompassing key architectures like convolutional neural networks, recurrent neural networks, and their variants (e.g., long short-term memory networks), which are particularly suited for spatial and spatiotemporal data analyses. The core of this review is that it synthesizes and critically evaluates the modeling mechanisms and specific applications of DL techniques across various tasks within UTI processing and analysis. Key application areas include, but are not limited to the following: ultrasound-video synchronization: precise temporal alignment of ultrasound image sequences with concurrently recorded video (e.g., lip movement) and acoustic signals for multimodal analysis. Biometric identification: exploiting unique individual tongue movement patterns derived from UTI for speaker recognition or verification systems. Multimodal learning: integrating UTI data with complementary modalities such as audio signals, electromagnetic articulography, or visual speech information (video) to build robust and comprehensive models of speech production using fusion techniques. Silent speech interfaces (SSIs): enabling speech recognition or synthesis solely from articulatory movements captured by UTI, which is particularly valuable in noisy environments or for individuals with voice impairments. Acoustic-articulatory inversion modeling: predicting the underlying articulatory configurations (represented by UTI data) from the acoustic speech signal or synthesizing speech from articulatory data. Language learning and speech rehabilitation: providing visual biofeedback of tongue positioning and movement to second language learners aiming to master new phonemes or to individuals undergoing therapy for speech sound disorders (e.g., apraxia and dysarthria). Innovative interaction and artistic expression: exploring novel human-computer interaction paradigms and artistic performances utilizing real-time tongue movement tracking. For each application domain, this review systematically delineates the underlying theoretical frameworks, specific technical methodologies employed (detailing network architectures, input representations, loss functions, and training protocols), and established performance evaluation metrics used to assess the efficacy of the proposed DL solutions. Common metrics include contour extraction accuracy (e.g., Dice coefficient and Hausdorff distance), tracking error, recognition accuracy, synthesis quality (e.g., Mel cepstral distortion), and inference speed. Finally, this review critically examines the current state of the field and outlines compelling research prospects and future trends for DL in UTI processing and analysis. These aspects include the following: development of more efficient and lightweight models: enabling real-time processing on mobile or embedded devices for practical applications like wearable SSI or biofeedback tools. Enhanced robustness and generalization: creating models that perform reliably across diverse speakers, accents, imaging devices, and recording conditions, potentially through advanced domain adaptation, data augmentation, or self-supervised/semisupervised learning paradigms. Explainable artificial intelligence (AI) for UTI: moving beyond black-box predictions to develop interpretable DL models that provide insights into how articulatory features are learned and utilized, fostering trust and deep linguistic understanding. Integration with advanced speech synthesis (vocoding): combining high-quality articulatory-to-acoustic inversion models with state-of-the-art neural vocoders for natural-sounding speech synthesis from UTI. Large-scale, multispeaker UTI datasets: facilitating the training of generalizable and powerful models through collaborative efforts to create and share comprehensive, annotated datasets. Exploration of self-supervised and unsupervised learning: reducing the heavy reliance on manually annotated UTI data by leveraging the inherent structure within unlabeled ultrasound sequences. Cross-lingual and low-resource adaptation: developing techniques to effectively apply models trained on data-rich languages to under-resourced languages with limited UTI data. Combining UTI with other biosignals: investigating fusion with neural signals (e.g., EEG) or other physiological data for next-generation brain-computer interfaces or advanced speech rehabilitation monitoring. Future progress hinges on sustained interdisciplinary collaboration between linguists, speech scientists, computer vision researchers, machine learning experts, and engineers. Beyond technical advancements, the ethical deployment and accessibility of UTI-DL systems warrant significant attention. Ensuring data privacy in biometric applications, addressing algorithmic bias across diverse populations, and developing cost-effective solutions for clinical and educational settings are crucial for responsible innovation. Furthermore, establishing standardized evaluation benchmarks and open-source frameworks will accelerate reproducibility and community-driven progress. The continued integration and innovation in computer science, particularly in AI and machine learning, alongside advancements in ultrasound imaging technology, are anticipated to significantly propel scientific research and disciplinary development within experimental phonetics and linguistics. DL stands as a pivotal catalyst, unlocking the full potential of UTI as a powerful tool for understanding speech production, developing novel technologies, and enhancing human communication and well-being.关键词:deep learning(DL);ultrasonic tongue imaging(UTI);articulatory physiological tongue body;speech engineering;speech recognition;speech synthesis82|99|0更新时间:2026-02-11

Review

- “介绍了其在军事目标检测领域的研究进展,相关专家构建了无人机视角坦克目标检测仿真数据集,验证了仿真数据在缓解实战图像数据稀缺问题上的有效性,为武器平台的实战运用提供了支持。”

摘要:ObjectiveThe continuous evolution of computer simulation technology has made synthetic data an effective solution for data-scarce domains. However, in military target detection—where battlefield samples are exceptionally sparse—the cross-domain generalization of models trained on synthetic data remains empirically unverified. This research aims to explore the mechanisms underlying the cross-domain generalization of synthetic data, with a particular emphasis on tank target detection from unmanned aerial vehicle (UAV) perspectives. A key contribution of this study is the development of the synthetic tank (ST) 1.0 dataset, which comprises three distinct subsets (ST1, ST2, and ST3), each containing 1 600 images. These subsets are differentiated by their data sources and exhibit varying levels of simulation precision and scenario realism. This tiered design enables the deconstruction of the “domain gap” to quantify the effect of different types of synthetic data on model generalization. Ultimately, this research seeks to provide quantitative and qualitative insights into how synthetic data can be leveraged to train object detectors for practical deployment in modern weapon systems.MethodTo rigorously evaluate the cross-domain generalization of models trained on synthetic data, this study proposes a multilayered validation framework and a structured experimental design. The three-level validation framework systematically assesses performance across domains: 1) synthetic domain: models are tested on reserved synthetic subsets (e.g., models trained on ST1 are evaluated on the ST1 test set), establishing a performance benchmark in a controlled “ideal” setting to quantify basic synthetic-domain capabilities. 2) Real domain: performance is evaluated using public real-world military tank datasets comprising 41 images, with 11 sourced from ImageNet and 30 from Open Images V7. This step quantifies the initial synthetic-to-real domain discrepancy, elucidating the challenges in transitioning from controlled synthetic environments to real-world scenarios. 3) Combat domain: models are evaluated on a proprietary dataset consisting of UAV-acquired imagery collected from combat areas. This dataset contains 1 000 images depicting complex combat scenarios and is designed to serve as a rigorous benchmark for assessing model generalization and battlefield adaptability. To mitigate architecture-specific biases, we evaluate three representative heterogeneous object detectors: cascade R-CNN is a two-stage anchor-based model that enhances proposal accuracy through cascaded regression and classification. YOLOv10 is a single-stage detector optimized for latency-sensitive applications while maintaining competitive precision. RT-DETR is a real-time Transformer-based architecture that leverages global self-attention to model long-range dependencies. Furthermore, we incorporate a contemporary state-of-the-art detector, YOLOv12, as an additional performance benchmark. The experimental design is structured around three research inquiries: 1) synthetic domain discrepancy: this inquiry analyzes how model performance varies when trained on ST1, ST2, or ST3 and evaluated using the three-level validation framework, directly assessing the influence of different synthetic domains on generalization. 2) Mixed synthetic strategies: this aspect investigates the effects of training on mixed datasets, aiming to determine whether combining samples from multiple synthetic domains can enhance model robustness and generalization capabilities. 3) Data augmentation efficacy: this inquiry examines the effectiveness of using data from one synthetic domain to augment training on another. This approach systematically assesses whether cross-domain data augmentation can further improve generalization and mitigate domain-specific biases.ResultExperimental investigations provided insights into the intricate relationship between synthetic data and real-world model performance. First, across all evaluated architectures, model performance—as measured by average precision —declined significantly when moving from controlled synthetic environments to real-world scenarios and further to the highly unpredictable combat domain. This trend clearly highlights the pronounced effect of the domain gap. Second, among the three detectors, RT-DETR consistently exhibited greater cross-domain robustness, with performance degrading more gradually in real-world and combat domains in comparison with the other detectors. Third, the generalization capability of models can be substantially enhanced through the incorporation of high-quality synthetic data. Such data are characterized by three critical attributes: photorealistic fidelity, adaptability to target-domain attributes, and close alignment with the feature distribution of real-world data. These qualities collectively ensure that synthetic data not only visually resemble real data but also effectively capture the underlying characteristics and variability of the target domain, thereby facilitating improved model robustness and transferability across diverse operational scenarios. Fourth, model performance can be further improved by incorporating high-quality synthetic data through data mixing or augmentation strategies. These methods effectively reduce overfitting on dataset-specific characteristics by introducing data distributions that closely mirror those of the target domain.ConclusionResearch has demonstrated that synthetic data can partially alleviate the scarcity of real-world data in the military domain, providing critical support for the practical deployment of weapon systems. However, synthetic data continue to exhibit inherent limitations in cross-domain generalization. Future research should prioritize the collaborative construction of multisource heterogeneous datasets and the deep integration of domain generalization techniques while broadening the research scope to encompass a wider array of military target categories and model architectures. Furthermore, investigating the security boundaries of intelligent data fusion in decision-making processes is essential to establish more scientific and reliable methodologies for constructing military object detection datasets.关键词:military tank detection;unmanned aerical vehicle(UAV);synthetic data;single-source domain generalization;operational application355|219|0更新时间:2026-02-11

摘要:ObjectiveThe continuous evolution of computer simulation technology has made synthetic data an effective solution for data-scarce domains. However, in military target detection—where battlefield samples are exceptionally sparse—the cross-domain generalization of models trained on synthetic data remains empirically unverified. This research aims to explore the mechanisms underlying the cross-domain generalization of synthetic data, with a particular emphasis on tank target detection from unmanned aerial vehicle (UAV) perspectives. A key contribution of this study is the development of the synthetic tank (ST) 1.0 dataset, which comprises three distinct subsets (ST1, ST2, and ST3), each containing 1 600 images. These subsets are differentiated by their data sources and exhibit varying levels of simulation precision and scenario realism. This tiered design enables the deconstruction of the “domain gap” to quantify the effect of different types of synthetic data on model generalization. Ultimately, this research seeks to provide quantitative and qualitative insights into how synthetic data can be leveraged to train object detectors for practical deployment in modern weapon systems.MethodTo rigorously evaluate the cross-domain generalization of models trained on synthetic data, this study proposes a multilayered validation framework and a structured experimental design. The three-level validation framework systematically assesses performance across domains: 1) synthetic domain: models are tested on reserved synthetic subsets (e.g., models trained on ST1 are evaluated on the ST1 test set), establishing a performance benchmark in a controlled “ideal” setting to quantify basic synthetic-domain capabilities. 2) Real domain: performance is evaluated using public real-world military tank datasets comprising 41 images, with 11 sourced from ImageNet and 30 from Open Images V7. This step quantifies the initial synthetic-to-real domain discrepancy, elucidating the challenges in transitioning from controlled synthetic environments to real-world scenarios. 3) Combat domain: models are evaluated on a proprietary dataset consisting of UAV-acquired imagery collected from combat areas. This dataset contains 1 000 images depicting complex combat scenarios and is designed to serve as a rigorous benchmark for assessing model generalization and battlefield adaptability. To mitigate architecture-specific biases, we evaluate three representative heterogeneous object detectors: cascade R-CNN is a two-stage anchor-based model that enhances proposal accuracy through cascaded regression and classification. YOLOv10 is a single-stage detector optimized for latency-sensitive applications while maintaining competitive precision. RT-DETR is a real-time Transformer-based architecture that leverages global self-attention to model long-range dependencies. Furthermore, we incorporate a contemporary state-of-the-art detector, YOLOv12, as an additional performance benchmark. The experimental design is structured around three research inquiries: 1) synthetic domain discrepancy: this inquiry analyzes how model performance varies when trained on ST1, ST2, or ST3 and evaluated using the three-level validation framework, directly assessing the influence of different synthetic domains on generalization. 2) Mixed synthetic strategies: this aspect investigates the effects of training on mixed datasets, aiming to determine whether combining samples from multiple synthetic domains can enhance model robustness and generalization capabilities. 3) Data augmentation efficacy: this inquiry examines the effectiveness of using data from one synthetic domain to augment training on another. This approach systematically assesses whether cross-domain data augmentation can further improve generalization and mitigate domain-specific biases.ResultExperimental investigations provided insights into the intricate relationship between synthetic data and real-world model performance. First, across all evaluated architectures, model performance—as measured by average precision —declined significantly when moving from controlled synthetic environments to real-world scenarios and further to the highly unpredictable combat domain. This trend clearly highlights the pronounced effect of the domain gap. Second, among the three detectors, RT-DETR consistently exhibited greater cross-domain robustness, with performance degrading more gradually in real-world and combat domains in comparison with the other detectors. Third, the generalization capability of models can be substantially enhanced through the incorporation of high-quality synthetic data. Such data are characterized by three critical attributes: photorealistic fidelity, adaptability to target-domain attributes, and close alignment with the feature distribution of real-world data. These qualities collectively ensure that synthetic data not only visually resemble real data but also effectively capture the underlying characteristics and variability of the target domain, thereby facilitating improved model robustness and transferability across diverse operational scenarios. Fourth, model performance can be further improved by incorporating high-quality synthetic data through data mixing or augmentation strategies. These methods effectively reduce overfitting on dataset-specific characteristics by introducing data distributions that closely mirror those of the target domain.ConclusionResearch has demonstrated that synthetic data can partially alleviate the scarcity of real-world data in the military domain, providing critical support for the practical deployment of weapon systems. However, synthetic data continue to exhibit inherent limitations in cross-domain generalization. Future research should prioritize the collaborative construction of multisource heterogeneous datasets and the deep integration of domain generalization techniques while broadening the research scope to encompass a wider array of military target categories and model architectures. Furthermore, investigating the security boundaries of intelligent data fusion in decision-making processes is essential to establish more scientific and reliable methodologies for constructing military object detection datasets.关键词:military tank detection;unmanned aerical vehicle(UAV);synthetic data;single-source domain generalization;operational application355|219|0更新时间:2026-02-11

Dataset

- “相关研究在低光图像增强领域取得新进展,研究人员提出了一种融合多重空洞卷积与坐标分组的暗光图像增强网络(MCCNet),为解决低光环境下拍摄图像存在的亮度不均、细节丢失和色彩失真等问题提供了有效方案。”

摘要:ObjectiveImages captured under low-light conditions often suffer from various visual quality degradation issues, including uneven illumination, significant loss of structural and textural details, and severe color distortions. These issues not only compromise human visual perception but also pose serious challenges for high-level vision tasks such as object detection and recognition. While numerous low-light image enhancement (LLIE) techniques have been developed to address these problems, most existing methods primarily concentrate on improving brightness or contrast. As a result, they often overlook the critical aspect of accurate color restoration, leading to undesired artifacts such as color casts, overenhancement, or structural inconsistencies. Conventional approaches, such as Retinex-based models, enhance image brightness by decomposing images into reflectance and illumination components. However, this strategy tends to produce color distortions, particularly in extremely low-light scenarios in which the lack of information causes the reflectance component to be inaccurately estimated. Deep learning-based methods have emerged as a promising alternative given their ability to learn complex mappings between low- and normal-light domains. Nonetheless, many of these models are characterized by large network sizes, high computational costs, and suboptimal generalization to diverse illumination conditions, making them minimally suitable for practical deployment on resource-constrained platforms.MethodTo overcome the aforementioned challenges, we propose a novel LLIE framework named multiscale dilated convolution with coordinate grouping enhancement network (MCCNet). MCCNet is designed with a clear emphasis on brightness restoration and color correction, ensuring visually pleasing and natural-looking outputs. The architecture comprises two independently designed yet interactively trained branches: a color conversion branch and a detail enhancement branch. The color conversion branch operates in a hue-value-intensity (HUI) color space, which is more perceptually aligned with human vision than traditional RGB representations. We introduce a color adaptation factor that is dynamically learned on the basis of pixel-wise weight perception to effectively decouple and modulate the color and brightness components of images. This decoupling enables targeted enhancement of color fidelity without introducing additional artifacts or distortion. In the enhancement branch, we design a global grouped coordinate attention (GGCA) module to improve feature expressiveness and encourage effective cross-branch information exchange. GGCA selectively emphasizes meaningful features while suppressing irrelevant or noisy signals, which is especially beneficial for images with high levels of darkness and low signal-to-noise ratios. Furthermore, we propose a multiscale dilated fusion attention (MDFA) module that aggregates features across multiple dilation rates. This module enables the network to capture global context and fine-grained local structure, leading to precise brightness recovery and edge preservation. By fusing multiscale features, MDFA mitigates the typical blurring and oversmoothing problems that occur in conventional convolutional enhancement networks. The two branches of MCCNet work collaboratively to generate high-quality enhanced images. The color conversion branch ensures accurate chromatic consistency, while the enhancement branch restores luminance and structure details. This dual-branch strategy effectively addresses the common trade-off between brightness enhancement and color fidelity that limits many existing LLIE methods.ResultTo thoroughly evaluate the performance of the proposed MCCNet, we conduct comprehensive experiments on multiple public benchmarks, including LOLv1, LOLv2, and five unpaired real-world low-light datasets. In addition, we test the model on two extremely low-light subsets to assess its robustness under severe lighting degradation. MCCNet is compared against 15 state-of-the-art LLIE methods, including traditional models like RetinexNet and zero-reference deep curve estimation(Zero-DCE), as well as recent deep learning approaches such as enlightening generative adversarial networks for low-light image enhancement(EnlightGAN), unsupervised retinex network(URetinex-Net), generative diffusion prior for unified image restoration and enhancement(GDP), lightening diffusion for low-light image enhancement(LightenDiffusion), and CIDNet. Quantitative results demonstrate that MCCNet achieves superior performance in terms of peak signal-to-noise ratio (PSNR) and structural similarity index (SSIM). Specifically, MCCNet improves PSNR by 1.57 dB compared with the best-performing baseline on the LOLv1 dataset. Our method also achieves the highest SSIM score, indicating better structural preservation and perceptual quality. Qualitative comparisons reveal that MCCNet produces images with more natural lighting, reduced noise, and vivid, realistic colors, even in complex scenes with significant shadows or saturation challenges. In terms of computational complexity, MCCNet contains only 1.98 million parameters, and FLOPs are limited to 8.06 G. This dramatic reduction in model size and computational load makes MCCNet highly suitable for deployment on edge devices, mobile platforms, or real-time applications where efficiency is critical.ConclusionThe proposed color space-based MCCNet effectively restores brightness and color, addresses color cast and artifacts, and excels at lighting adjustment and noise suppression, achieving high-quality visual enhancement. Moreover, the method fully considers the practical demands of computational complexity and model size. The MCCNet model contains only 1.98 million parameters and requires 8.06 G FLOPs. While achieving state-of-the-art performance, it significantly reduces model size and computational cost. In particular, compared with classic image enhancement methods and recent advanced algorithms, MCCNet reduces model parameters and computational load by approximately 96% and 46% on average, respectively, demonstrating an efficient and lightweight design.关键词:low-light image enhancement;HVI color space;attention mechanism;Coordinate grouping;dilated convolution140|112|0更新时间:2026-02-11

摘要:ObjectiveImages captured under low-light conditions often suffer from various visual quality degradation issues, including uneven illumination, significant loss of structural and textural details, and severe color distortions. These issues not only compromise human visual perception but also pose serious challenges for high-level vision tasks such as object detection and recognition. While numerous low-light image enhancement (LLIE) techniques have been developed to address these problems, most existing methods primarily concentrate on improving brightness or contrast. As a result, they often overlook the critical aspect of accurate color restoration, leading to undesired artifacts such as color casts, overenhancement, or structural inconsistencies. Conventional approaches, such as Retinex-based models, enhance image brightness by decomposing images into reflectance and illumination components. However, this strategy tends to produce color distortions, particularly in extremely low-light scenarios in which the lack of information causes the reflectance component to be inaccurately estimated. Deep learning-based methods have emerged as a promising alternative given their ability to learn complex mappings between low- and normal-light domains. Nonetheless, many of these models are characterized by large network sizes, high computational costs, and suboptimal generalization to diverse illumination conditions, making them minimally suitable for practical deployment on resource-constrained platforms.MethodTo overcome the aforementioned challenges, we propose a novel LLIE framework named multiscale dilated convolution with coordinate grouping enhancement network (MCCNet). MCCNet is designed with a clear emphasis on brightness restoration and color correction, ensuring visually pleasing and natural-looking outputs. The architecture comprises two independently designed yet interactively trained branches: a color conversion branch and a detail enhancement branch. The color conversion branch operates in a hue-value-intensity (HUI) color space, which is more perceptually aligned with human vision than traditional RGB representations. We introduce a color adaptation factor that is dynamically learned on the basis of pixel-wise weight perception to effectively decouple and modulate the color and brightness components of images. This decoupling enables targeted enhancement of color fidelity without introducing additional artifacts or distortion. In the enhancement branch, we design a global grouped coordinate attention (GGCA) module to improve feature expressiveness and encourage effective cross-branch information exchange. GGCA selectively emphasizes meaningful features while suppressing irrelevant or noisy signals, which is especially beneficial for images with high levels of darkness and low signal-to-noise ratios. Furthermore, we propose a multiscale dilated fusion attention (MDFA) module that aggregates features across multiple dilation rates. This module enables the network to capture global context and fine-grained local structure, leading to precise brightness recovery and edge preservation. By fusing multiscale features, MDFA mitigates the typical blurring and oversmoothing problems that occur in conventional convolutional enhancement networks. The two branches of MCCNet work collaboratively to generate high-quality enhanced images. The color conversion branch ensures accurate chromatic consistency, while the enhancement branch restores luminance and structure details. This dual-branch strategy effectively addresses the common trade-off between brightness enhancement and color fidelity that limits many existing LLIE methods.ResultTo thoroughly evaluate the performance of the proposed MCCNet, we conduct comprehensive experiments on multiple public benchmarks, including LOLv1, LOLv2, and five unpaired real-world low-light datasets. In addition, we test the model on two extremely low-light subsets to assess its robustness under severe lighting degradation. MCCNet is compared against 15 state-of-the-art LLIE methods, including traditional models like RetinexNet and zero-reference deep curve estimation(Zero-DCE), as well as recent deep learning approaches such as enlightening generative adversarial networks for low-light image enhancement(EnlightGAN), unsupervised retinex network(URetinex-Net), generative diffusion prior for unified image restoration and enhancement(GDP), lightening diffusion for low-light image enhancement(LightenDiffusion), and CIDNet. Quantitative results demonstrate that MCCNet achieves superior performance in terms of peak signal-to-noise ratio (PSNR) and structural similarity index (SSIM). Specifically, MCCNet improves PSNR by 1.57 dB compared with the best-performing baseline on the LOLv1 dataset. Our method also achieves the highest SSIM score, indicating better structural preservation and perceptual quality. Qualitative comparisons reveal that MCCNet produces images with more natural lighting, reduced noise, and vivid, realistic colors, even in complex scenes with significant shadows or saturation challenges. In terms of computational complexity, MCCNet contains only 1.98 million parameters, and FLOPs are limited to 8.06 G. This dramatic reduction in model size and computational load makes MCCNet highly suitable for deployment on edge devices, mobile platforms, or real-time applications where efficiency is critical.ConclusionThe proposed color space-based MCCNet effectively restores brightness and color, addresses color cast and artifacts, and excels at lighting adjustment and noise suppression, achieving high-quality visual enhancement. Moreover, the method fully considers the practical demands of computational complexity and model size. The MCCNet model contains only 1.98 million parameters and requires 8.06 G FLOPs. While achieving state-of-the-art performance, it significantly reduces model size and computational cost. In particular, compared with classic image enhancement methods and recent advanced algorithms, MCCNet reduces model parameters and computational load by approximately 96% and 46% on average, respectively, demonstrating an efficient and lightweight design.关键词:low-light image enhancement;HVI color space;attention mechanism;Coordinate grouping;dilated convolution140|112|0更新时间:2026-02-11 - “本文介绍了盲源分离(BSS)及其在图像处理领域中的应用盲图像分离(BIS)的背景和重要性,指出在复杂图像分离任务中,基于统计约束的算法存在局限性。”

摘要:ObjectiveBlind image separation (BIS) is the application of blind source separation in the domain of image processing. It refers to the inverse problem of simultaneously estimating and restoring multiple independent source images from a single observation image under conditions of unknown mixing mode and without prior knowledge of the source images. Typical applications include bad weather (rain, snow) removal and reflection/shadow layer separation. Traditional methods, represented by independent component analysis and its improved algorithms, rely on strong assumptions such as “statistical independence of source signals” and “non-Gaussianity,” which often fail for real images owing to strong feature correlation and nonlinear mixing. Although sparse coding and low-rank decomposition, which emerged later, introduced prior constraints, they remained difficult for characterizing complex textures and structures. In recent years, deep learning frameworks have gradually dominated: convolutional neural networks (CNNs) achieved efficient feature extraction with local receptive fields. Generative adversarial networks (GANs) circumvented explicit priors through adversarial training, and variants such as CycleGAN, Attention-GAN, and Transformer-GAN have been proposed successively, achieving significant progress on synthetic datasets. However, in complex real scenes, existing methods based on CNNs and GANs still exhibit insufficient performance in processing such mixed images. The reasons are as follows: 1) uncertainty in source feature distribution (features within the same source category vary significantly in shape, transparency, and scale, resulting in modeling difficulties); 2) complex image mixing (nonlinearity, crosstalk between channels, making the “reverse mapping” nonunique); and 3) irregular noise interference (sensor noise, compression artifacts, motion blur coupled with the source signal, further blurring the separable boundary). These factors make it difficult for models to characterize the complex and variable feature distribution of source images in real scenes. Consequently, under strong noise, nonlinear mixing, and highly coupled texture details, problems, including source image separation estimation bias, texture distortion, and artifact residue, arise. These problems negatively impact the effectiveness of image restoration. To address these challenges, this study proposes a novel dual-channel diffusion separation model (DCDSM). It leverages the powerful generative ability of diffusion models to handle complex mixed images effectively.MethodDCDSM consists of a dual-branch structure based on a conditional diffusion model. This model exploits the diffusion process to learn the feature distribution of the source images, enabling the reconstruction of the feature structure for initial separation. During the reverse denoising process of the dual-branch diffusion, the design of the wavelet suppression module (WSM) is grounded in the characteristic of mutual coupling noise between the two source images. The structure of the interactive dual-branch separation network enhances the separation of detailed information within mixed images. WSM is composed of two independent wavelet frequency-domain feature extraction networks (WFENs). A WFEN employs a two-dimensional discrete wavelet transform to process high- and low-frequency sub-bands in the time-frequency domain to obtain suppression information. In the time domain, an encoder-decoder structure is utilized to capture global contextual features and reconstruct local details, thereby enhancing the texture and edge information in the low-frequency sub-bands. In the frequency domain, a window-based frequency channel attention mechanism is introduced to process the high-frequency sub-bands. Finally, a two-dimensional wavelet inverse transform is employed to integrate the outputs from both branches to obtain the suppression information. Simultaneously, the noise output from the intermediate process of the other branch is subtracted pixel-wise from the suppression information to decouple the two source images, thereby further improving the model’s performance in image separation tasks.ResultDCDSM is validated through the construction of synthetic datasets from diverse application scenarios, encompassing rain removal, snow removal, and the simulation of complex mixtures. The experimental results are as follows: 1) in the tasks of rain and snow image restoration, DCDSM achieves quantitative metrics (PSNR/SSIM) of 35.002 3 dB/0.954 9 and 29.810 8 dB/0.924 3, respectively, demonstrating an average improvement of 1.257 0 dB/0.927 2 dB (PSNR) and 0.026 2/0.028 9 (SSIM) over the current state-of-the-art methods. 2) For the dual-blind separation of complex mixed images, the restored dual-source images exhibit significantly better texture fidelity and detail integrity compared with those from other methods, with PSNR and SSIM metrics reaching 25.004 9 dB and 0.799 7, respectively, surpassing those of the comparative methods by 4.124 9 dB and 0.092 6, respectively. Ablation experiments validate the effectiveness of the proposed modules and the interpretability and rationality of the selected hyperparameters.ConclusionThe experiments of DCDSM on rain, snow, and complex mixed datasets verify the effectiveness of the method in the task of dual-channel BIS. Experimental results show that the proposed method achieves the best subjective and objective indicators compared with other methods and solves the problems of residual rain/snow lines and blurred texture edge details in real complex separation scenes.关键词:blind image separation(BIS);diffusion model(DM);wavelet transform;Fourier transform;image restoration54|89|0更新时间:2026-02-11