最新刊期

卷 31 , 期 1 , 2026

Scholar View

- “#论文摘要#,介绍了其在人工智能领域的研究进展,xx专家探索了智能算法优化课题,为提升算法效率提供解决方案。”

- “介绍了其在人工智能安全与治理领域的研究进展,相关专家从多种维度对现有生成图像检测方法进行分类与分析,为解决生成图像检测难题提供了新思路。”

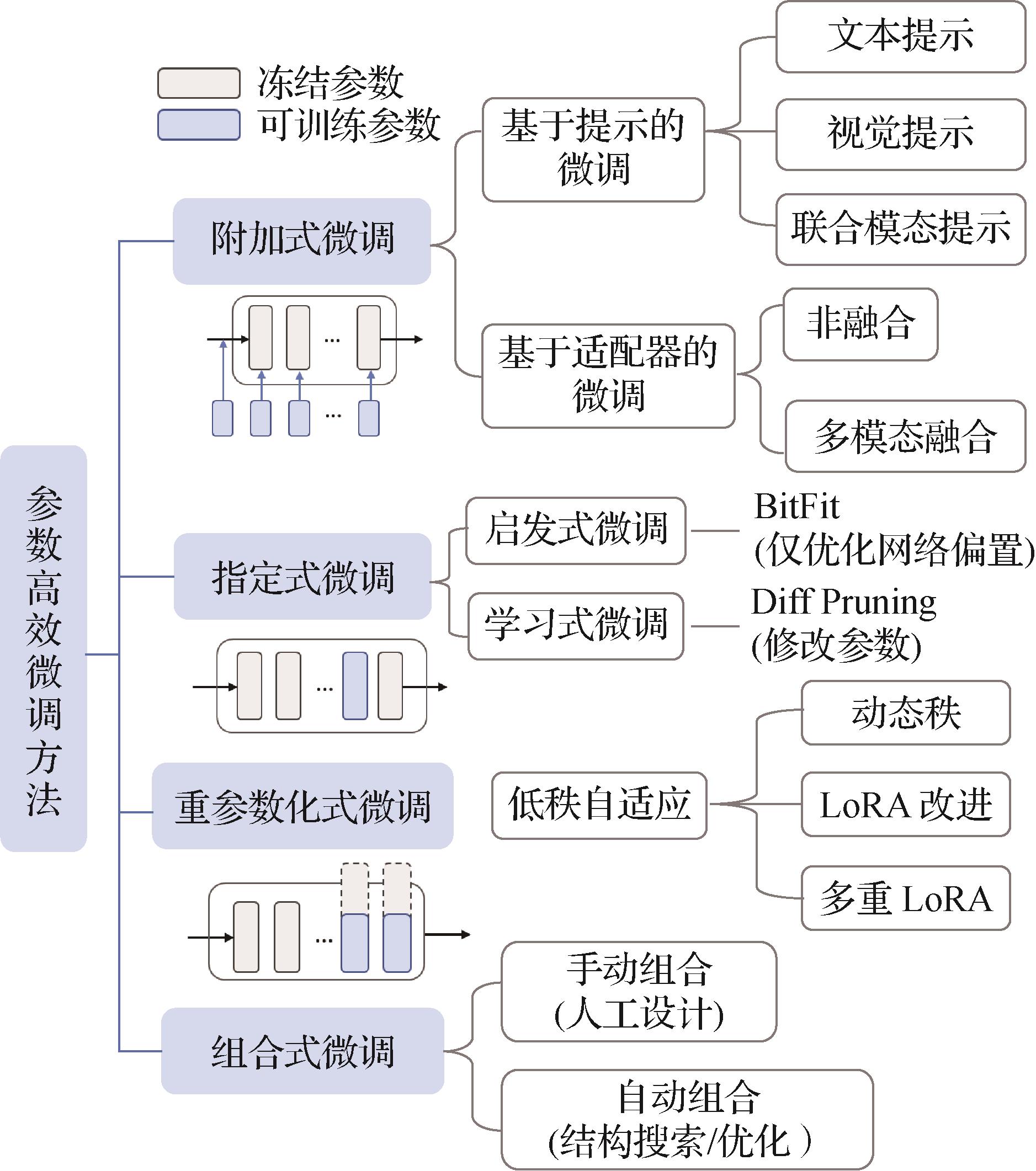

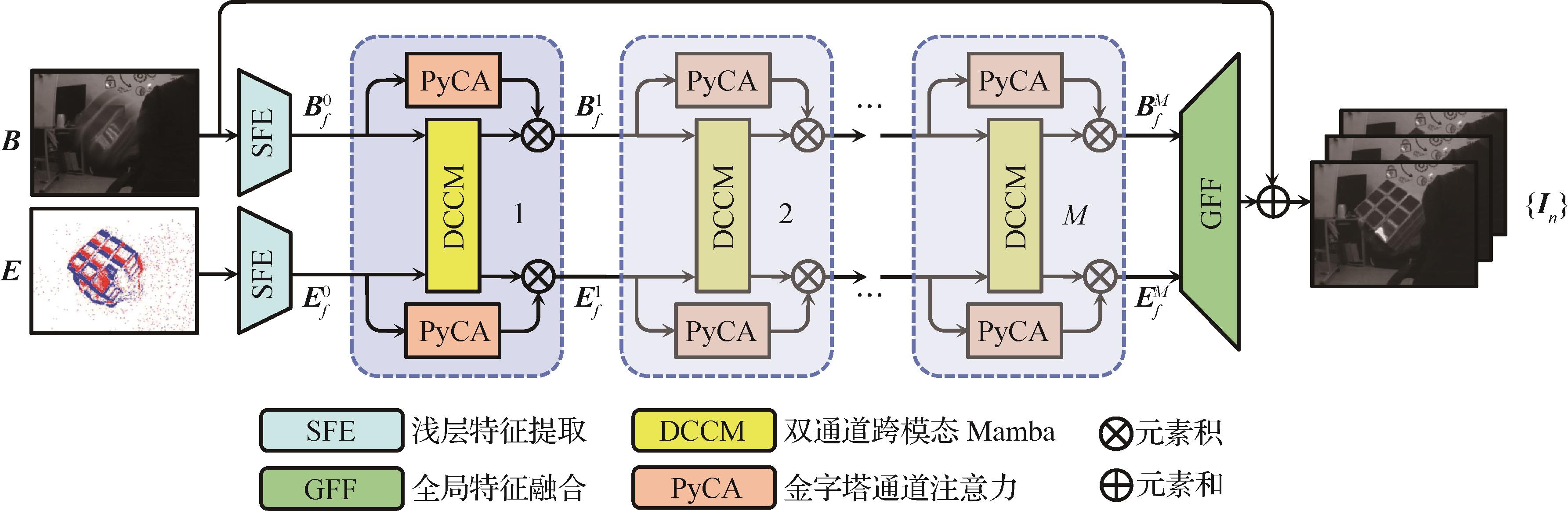

摘要:In the era of rapid advancements in generative artificial intelligence, the explosive growth of artificial intelligence-generated content (AIGC), propelled by the widespread use of models such as ChatGPT and diffusion models, has given rise to significant challenges. The proliferation of false information, fraudulent content, and inappropriate remarks has severely undermined the authenticity and credibility of information dissemination, highlighting the urgent need for effective artificial intelligence-generated images (AIGI) detection. The detection of AIGI, which aims to determine whether an image is generated by a generative model, is a crucial research direction in the field of AI security and governance. Early detection methods focused on detecting the content generated by generative adversarial networks. In recent years, images generated by diffusion models have received extensive attention, and related detection methods have emerged, demonstrating superior performance. However, current detection methods still encounter difficulties in achieving high-generalization and robust detection performance due to the diversity of generative model structures, the complexity of generated images, and the uncertainty of post-processing operations on generated images. This comprehensive review aims to provide a thorough understanding of the research landscape in AIGI detection. The paper first commences with an in-depth exploration of mainstream image-generation models. Generative adversarial networks, with their adversarial training mechanism between generators and discriminators, have evolved through various architectures such as conditional GAN (CGAN), CycleGAN, and StyleGAN, each enhancing the controllability and quality of generated images. Autoregressive models, inspired by the success of GPT-series in natural language processing, such as Image GPT and DALL-E, leverage powerful attention mechanisms for image generation. Based on the principles of diffusion and reverse diffusion processes, diffusion models have demonstrated remarkable capabilities in generating high-quality images, leading to the emergence of numerous models such as Stable Diffusion and its variants in recent years. Understanding these models is fundamental because their unique characteristics leave identifiable patterns in the generated images, which detection methods aim to identify. Subsequently, existing AIGI detection methods are systematically categorized from multiple perspectives, including supervision paradigm, learning methods, detection basis, backbone network, technical means, and interpretability. In terms of the supervision paradigm, most methods are based on supervised detection, with some incorporating additional contrastive learning losses or regularization terms to improve performance. Though less common, semi-supervised and unsupervised detection methods also contribute to the field. Regarding learning methods, eager learning-based approaches, especially those that using binary classifiers, are dominant, while lazy learning-based methods, such as those relying on the K-nearest neighbors algorithm, offer an alternative without requiring extensive training. When categorized by detection basis, methods based on pixel-domain features use characteristics such as texture, color, and reconstruction error. Frequency domain-based methods detect AIGI through the analysis of unique frequency patterns of generated images, such as the spectrum replication caused by upsampling in GAN models. Methods based on pre-trained model features leverage the powerful feature extraction capabilities of large pre-trained models such as contrastive language-image pre-training(CLIP) and Vision Transformer. Fusion feature-based methods combine different types of features, either from single-modality (e.g., fusing pixel and frequency domain features) or multi-modality (e.g., integrating image and text features), to improve detection accuracy. In contrast, rule-based methods rely on differences in the sensitivity of real and generated images to certain operations for detection. According to backbone network, early studies rely on traditional machine learning models such as random forest, support vector machine, and logistic regression. Convolutional neural networks such as ResNet have later become increasingly popular due to their powerful capabilities on local features. With the advent of pre-trained large models, visual language models such as CLIP, masked autoencoder(MAE), and large language and vision assistant(LLaVa) are increasingly being used as backbone networks, often fine-tuned with techniques such as LoRA to adapt to the detection task. Aiming to enhance the efficiency and effectiveness of detection algorithms, various technical means are employed, including incremental learning, knowledge distillation, transfer learning, ensemble learning, and few-shot learning. Additionally, data augmentation methods such as JPEG compression, Gaussian blurring, and random flipping are used to increase the diversity of training samples and improve the generalization capability of the detection model. In terms of interpretability of detection methods, several techniques have been developed. Layer-wise relevance propagation analyzes the contribution of each layer in the neural network to the final decision, helping to understand model prediction. Genetic programming optimizes the model structure to improve interpretability. Local interpretable model-agnostic explanations clarifies model’s predictions at the local level without relying on the specific model structure. Grad-CAM generates visual explanations by highlighting the important regions in the image for the model’s decision-making. Using the detection basis as the main classification criterion, a detailed introduction and analysis of the research status are presented. A detailed summary of benchmark datasets for general AIGI detection is then provided. These datasets substantially vary in structure, scale, image content, and the types of generators they cover. Some datasets are designed for specific types of images or generators. For example, several datasets (such as Forensic Synthetics) are dedicated to GAN-generated image detection, while others (such as Synthbuster) focus on detecting images generated by diffusion models. Comprehensive datasets can also be used for detecting images generated by multiple types of generators. The diversity in datasets reflects the complexity of the AIGI detection task and the need for a standardized evaluation environment. Moreover, the evaluation dimensions of detection methods, namely in-domain accuracy, out-of-domain generalization, and robustness, are introduced in detail. In-domain accuracy is measured using metrics such as accuracy, precision, recall, and average precision, which measure the performance of detection methods on images generated by generative models included in the training set. In contrast, out-of-domain generalization evaluates the performance of detection methods on unknown generative models and categories outside the training set, which is crucial with the constant emergence of new generative models. This dimension is evaluated through cross-generator, cross-category, and other types of generalization tests. Robustness assesses the capability of detection methods to resist various image post-processing operations, including JPEG compression, Gaussian blurring, and adversarial sample attacks. Furthermore, a horizontal comparison of representative detection methods is conducted, and the results show that different methods exhibit diverse generalization capabilities. The choice of backbone network and training set substantially impacts detection results. For example, some methods perform better on specific generative models, while others demonstrate higher accuracy across multiple generative models. Detection methods based on fusion features generally exhibit superior performance in terms of detection accuracy and generalization. Finally, the paper highlights the challenges and open problems in the current AIGI detection field. These challenges include the construction of large-scale unbiased datasets, because the bias in existing training data can reduce the robustness and generalization of detection models. Designing robust detection methods against physical-level attacks, such as printing and social network-based image processing in real-world scenarios, is also necessary. Moreover, creating comprehensive detection methods that can effectively handle a variety of generative models is crucial to keep up with their rapid advancements. Additionally, research on anti-forensics attacks against detection methods is essential because it can help improve the resilience of detection techniques. Future research directions are outlined to address these challenges and drive the continuous development of AIGI detection technologies.关键词:artificial intelligence security;artificial intelligence-generated image(AIGI) detection;digital forensics;image forensics;DeepFake detection;image generative model;deep learning1144|512|0更新时间:2026-02-02

摘要:In the era of rapid advancements in generative artificial intelligence, the explosive growth of artificial intelligence-generated content (AIGC), propelled by the widespread use of models such as ChatGPT and diffusion models, has given rise to significant challenges. The proliferation of false information, fraudulent content, and inappropriate remarks has severely undermined the authenticity and credibility of information dissemination, highlighting the urgent need for effective artificial intelligence-generated images (AIGI) detection. The detection of AIGI, which aims to determine whether an image is generated by a generative model, is a crucial research direction in the field of AI security and governance. Early detection methods focused on detecting the content generated by generative adversarial networks. In recent years, images generated by diffusion models have received extensive attention, and related detection methods have emerged, demonstrating superior performance. However, current detection methods still encounter difficulties in achieving high-generalization and robust detection performance due to the diversity of generative model structures, the complexity of generated images, and the uncertainty of post-processing operations on generated images. This comprehensive review aims to provide a thorough understanding of the research landscape in AIGI detection. The paper first commences with an in-depth exploration of mainstream image-generation models. Generative adversarial networks, with their adversarial training mechanism between generators and discriminators, have evolved through various architectures such as conditional GAN (CGAN), CycleGAN, and StyleGAN, each enhancing the controllability and quality of generated images. Autoregressive models, inspired by the success of GPT-series in natural language processing, such as Image GPT and DALL-E, leverage powerful attention mechanisms for image generation. Based on the principles of diffusion and reverse diffusion processes, diffusion models have demonstrated remarkable capabilities in generating high-quality images, leading to the emergence of numerous models such as Stable Diffusion and its variants in recent years. Understanding these models is fundamental because their unique characteristics leave identifiable patterns in the generated images, which detection methods aim to identify. Subsequently, existing AIGI detection methods are systematically categorized from multiple perspectives, including supervision paradigm, learning methods, detection basis, backbone network, technical means, and interpretability. In terms of the supervision paradigm, most methods are based on supervised detection, with some incorporating additional contrastive learning losses or regularization terms to improve performance. Though less common, semi-supervised and unsupervised detection methods also contribute to the field. Regarding learning methods, eager learning-based approaches, especially those that using binary classifiers, are dominant, while lazy learning-based methods, such as those relying on the K-nearest neighbors algorithm, offer an alternative without requiring extensive training. When categorized by detection basis, methods based on pixel-domain features use characteristics such as texture, color, and reconstruction error. Frequency domain-based methods detect AIGI through the analysis of unique frequency patterns of generated images, such as the spectrum replication caused by upsampling in GAN models. Methods based on pre-trained model features leverage the powerful feature extraction capabilities of large pre-trained models such as contrastive language-image pre-training(CLIP) and Vision Transformer. Fusion feature-based methods combine different types of features, either from single-modality (e.g., fusing pixel and frequency domain features) or multi-modality (e.g., integrating image and text features), to improve detection accuracy. In contrast, rule-based methods rely on differences in the sensitivity of real and generated images to certain operations for detection. According to backbone network, early studies rely on traditional machine learning models such as random forest, support vector machine, and logistic regression. Convolutional neural networks such as ResNet have later become increasingly popular due to their powerful capabilities on local features. With the advent of pre-trained large models, visual language models such as CLIP, masked autoencoder(MAE), and large language and vision assistant(LLaVa) are increasingly being used as backbone networks, often fine-tuned with techniques such as LoRA to adapt to the detection task. Aiming to enhance the efficiency and effectiveness of detection algorithms, various technical means are employed, including incremental learning, knowledge distillation, transfer learning, ensemble learning, and few-shot learning. Additionally, data augmentation methods such as JPEG compression, Gaussian blurring, and random flipping are used to increase the diversity of training samples and improve the generalization capability of the detection model. In terms of interpretability of detection methods, several techniques have been developed. Layer-wise relevance propagation analyzes the contribution of each layer in the neural network to the final decision, helping to understand model prediction. Genetic programming optimizes the model structure to improve interpretability. Local interpretable model-agnostic explanations clarifies model’s predictions at the local level without relying on the specific model structure. Grad-CAM generates visual explanations by highlighting the important regions in the image for the model’s decision-making. Using the detection basis as the main classification criterion, a detailed introduction and analysis of the research status are presented. A detailed summary of benchmark datasets for general AIGI detection is then provided. These datasets substantially vary in structure, scale, image content, and the types of generators they cover. Some datasets are designed for specific types of images or generators. For example, several datasets (such as Forensic Synthetics) are dedicated to GAN-generated image detection, while others (such as Synthbuster) focus on detecting images generated by diffusion models. Comprehensive datasets can also be used for detecting images generated by multiple types of generators. The diversity in datasets reflects the complexity of the AIGI detection task and the need for a standardized evaluation environment. Moreover, the evaluation dimensions of detection methods, namely in-domain accuracy, out-of-domain generalization, and robustness, are introduced in detail. In-domain accuracy is measured using metrics such as accuracy, precision, recall, and average precision, which measure the performance of detection methods on images generated by generative models included in the training set. In contrast, out-of-domain generalization evaluates the performance of detection methods on unknown generative models and categories outside the training set, which is crucial with the constant emergence of new generative models. This dimension is evaluated through cross-generator, cross-category, and other types of generalization tests. Robustness assesses the capability of detection methods to resist various image post-processing operations, including JPEG compression, Gaussian blurring, and adversarial sample attacks. Furthermore, a horizontal comparison of representative detection methods is conducted, and the results show that different methods exhibit diverse generalization capabilities. The choice of backbone network and training set substantially impacts detection results. For example, some methods perform better on specific generative models, while others demonstrate higher accuracy across multiple generative models. Detection methods based on fusion features generally exhibit superior performance in terms of detection accuracy and generalization. Finally, the paper highlights the challenges and open problems in the current AIGI detection field. These challenges include the construction of large-scale unbiased datasets, because the bias in existing training data can reduce the robustness and generalization of detection models. Designing robust detection methods against physical-level attacks, such as printing and social network-based image processing in real-world scenarios, is also necessary. Moreover, creating comprehensive detection methods that can effectively handle a variety of generative models is crucial to keep up with their rapid advancements. Additionally, research on anti-forensics attacks against detection methods is essential because it can help improve the resilience of detection techniques. Future research directions are outlined to address these challenges and drive the continuous development of AIGI detection technologies.关键词:artificial intelligence security;artificial intelligence-generated image(AIGI) detection;digital forensics;image forensics;DeepFake detection;image generative model;deep learning1144|512|0更新时间:2026-02-02 -

摘要:In recent years, the number of neural network models has increased rapidly, and artificial intelligence technology represented by neural networks has achieved great success in many application fields. Neural network models inherently contain considerable redundant information. This redundancy creates favorable conditions for hiding confidential data. Therefore, neural network models can be used as covers for covert communication. This new paradigm is called neural network model steganography (model steganography). The steganographer chooses the location where confidential information is embedded in the model and uses a key to embed the confidential information into the model for transmission. The receiver uses the shared key to extract the confidential information in the location where it is embedded. Model steganography is used for covert communication without detection. In recent years, neural network model steganography technology has made great progress. In practice, it can be applied in some scenarios, such as military defense or secret communication between intelligence agencies, embedding confidential information in the model training process or hiding secret tasks in the model. In command distribution, the commander intends to send different commands to multiple officers, or multiple officers send different messages to the commander. Using model steganography allows transferring confidential information without being detected. Meanwhile, by modifying model parameters, malicious developers can embed malicious software into the benign model, resulting in the loss of model users. Using neural network backdoor technology to poison the target model enables performing different tasks defined by the attackers without the users’ knowledge. Technologies related to model steganography include model watermarking and multimedia steganography based on a neural network model. The model watermark takes the neural network as the protection object and embeds the digital watermark in the model to protect the intellectual property rights of the model owner. The watermark information embedded in the model can be extracted correctly without affecting the normal use of the cover model and without deliberately concealing the existence of the watermark information. In addition, the embedding capacity can accommodate the watermark information, so there is no need to pursue large capacity. Multimedia steganography based on a neural network model takes multimedia data as covers and the neural network model as a tool for information embedding and extraction and uses the neural network in each stage of embedding and extraction to embed confidential information in multimedia data. In terms of concealment, model steganography has unique advantages compared with its related technologies. The steganography of the model is naturally hidden. The model itself is a complex set of high-dimensional parameters, so a small number of parameter disturbances in the model are difficult to detect. Model steganography is usually achieved by modifying redundant parameters, which will not affect the function of the model. Regarding embedding capacity, model steganography has the potential of supercapacity compared with its related technologies. Model steganography can use parameter redundancy to embed data, and the neural network has a large number of parameters, so it can embed substantial information even if the minimum proportion parameters are modified. In accordance with the different strategies of model steganography, the existing methods can be divided into three categories: model steganography based on training, modification, and backdoor technology. Most of the results of model steganography are training-based model steganography. The main idea of training-based model steganography is to embed confidential information in the process of the training model. In the hidden layer of the model, the sender first selects the weight used to embed the confidential information and then embeds the confidential information into the model under the key function through the training model. In the output layer, the model output is required to be as similar as the confidential information as possible, and the model weight is constantly updated under the guidance of the confidential information. The basic idea of model steganography based on modification is to modify the model parameters to match the confidential information to achieve the purpose of embedding confidential information. Malicious payloads can be embedded without significantly affecting the model performance by replacing malware bytes or mapping model parameters to hide malware in the model. At the sending end, malicious developers choose to modify the location of model parameters to embed malicious software into the model. At the receiving end, they determine the location where the malicious software is embedded in the model parameters, extract the malicious software, check the integrity, and run the malicious software. Model steganography based on backdoor technology uses backdoor technology. Attackers bury backdoors in the model, making the infected model behave normally in general. However, when the backdoor trigger is activated, the output of the model will become the malicious target set by the attacker in advance. This method poisons the target model and can extract additional information from the output of the model. For the analysis method of model steganalysis, on the basis of whether the steganalyzer needs to master the internal details of the neural network model, current model steganalysis algorithms can be classified as white and black box model steganalysis. White box model steganalysis means that the analyst has knowledge and access rights to the internal structure and parameters of the model to detect and analyze the confidential information hidden in the model. Black box model steganalysis treats the target model as a “black box”, without accessing its internal structure and weight parameter details, to detect and analyze whether the model contains secret. To review the latest developments and trends, this study analyzes advanced methodologies in model steganography as follows: 1) it introduces the purpose and goal of model steganography, as well as its basic concepts, evaluation indicators, and technology classification. 2) The development status of model steganography is summarized and analyzed. 3) The advantages and disadvantages are compared and evaluated. 4) The development trend of model steganography is explored.关键词:steganography;model steganography;steganalysis;neural network;information hiding232|163|0更新时间:2026-02-02

摘要:In recent years, the number of neural network models has increased rapidly, and artificial intelligence technology represented by neural networks has achieved great success in many application fields. Neural network models inherently contain considerable redundant information. This redundancy creates favorable conditions for hiding confidential data. Therefore, neural network models can be used as covers for covert communication. This new paradigm is called neural network model steganography (model steganography). The steganographer chooses the location where confidential information is embedded in the model and uses a key to embed the confidential information into the model for transmission. The receiver uses the shared key to extract the confidential information in the location where it is embedded. Model steganography is used for covert communication without detection. In recent years, neural network model steganography technology has made great progress. In practice, it can be applied in some scenarios, such as military defense or secret communication between intelligence agencies, embedding confidential information in the model training process or hiding secret tasks in the model. In command distribution, the commander intends to send different commands to multiple officers, or multiple officers send different messages to the commander. Using model steganography allows transferring confidential information without being detected. Meanwhile, by modifying model parameters, malicious developers can embed malicious software into the benign model, resulting in the loss of model users. Using neural network backdoor technology to poison the target model enables performing different tasks defined by the attackers without the users’ knowledge. Technologies related to model steganography include model watermarking and multimedia steganography based on a neural network model. The model watermark takes the neural network as the protection object and embeds the digital watermark in the model to protect the intellectual property rights of the model owner. The watermark information embedded in the model can be extracted correctly without affecting the normal use of the cover model and without deliberately concealing the existence of the watermark information. In addition, the embedding capacity can accommodate the watermark information, so there is no need to pursue large capacity. Multimedia steganography based on a neural network model takes multimedia data as covers and the neural network model as a tool for information embedding and extraction and uses the neural network in each stage of embedding and extraction to embed confidential information in multimedia data. In terms of concealment, model steganography has unique advantages compared with its related technologies. The steganography of the model is naturally hidden. The model itself is a complex set of high-dimensional parameters, so a small number of parameter disturbances in the model are difficult to detect. Model steganography is usually achieved by modifying redundant parameters, which will not affect the function of the model. Regarding embedding capacity, model steganography has the potential of supercapacity compared with its related technologies. Model steganography can use parameter redundancy to embed data, and the neural network has a large number of parameters, so it can embed substantial information even if the minimum proportion parameters are modified. In accordance with the different strategies of model steganography, the existing methods can be divided into three categories: model steganography based on training, modification, and backdoor technology. Most of the results of model steganography are training-based model steganography. The main idea of training-based model steganography is to embed confidential information in the process of the training model. In the hidden layer of the model, the sender first selects the weight used to embed the confidential information and then embeds the confidential information into the model under the key function through the training model. In the output layer, the model output is required to be as similar as the confidential information as possible, and the model weight is constantly updated under the guidance of the confidential information. The basic idea of model steganography based on modification is to modify the model parameters to match the confidential information to achieve the purpose of embedding confidential information. Malicious payloads can be embedded without significantly affecting the model performance by replacing malware bytes or mapping model parameters to hide malware in the model. At the sending end, malicious developers choose to modify the location of model parameters to embed malicious software into the model. At the receiving end, they determine the location where the malicious software is embedded in the model parameters, extract the malicious software, check the integrity, and run the malicious software. Model steganography based on backdoor technology uses backdoor technology. Attackers bury backdoors in the model, making the infected model behave normally in general. However, when the backdoor trigger is activated, the output of the model will become the malicious target set by the attacker in advance. This method poisons the target model and can extract additional information from the output of the model. For the analysis method of model steganalysis, on the basis of whether the steganalyzer needs to master the internal details of the neural network model, current model steganalysis algorithms can be classified as white and black box model steganalysis. White box model steganalysis means that the analyst has knowledge and access rights to the internal structure and parameters of the model to detect and analyze the confidential information hidden in the model. Black box model steganalysis treats the target model as a “black box”, without accessing its internal structure and weight parameter details, to detect and analyze whether the model contains secret. To review the latest developments and trends, this study analyzes advanced methodologies in model steganography as follows: 1) it introduces the purpose and goal of model steganography, as well as its basic concepts, evaluation indicators, and technology classification. 2) The development status of model steganography is summarized and analyzed. 3) The advantages and disadvantages are compared and evaluated. 4) The development trend of model steganography is explored.关键词:steganography;model steganography;steganalysis;neural network;information hiding232|163|0更新时间:2026-02-02 - “深度神经网络在各领域广泛应用,但对抗样本威胁其可靠性和安全性。专家将对抗攻击技术分为白盒和黑盒攻击,详细介绍了对抗防御策略,为提升深度神经网络安全性提供解决方案。”

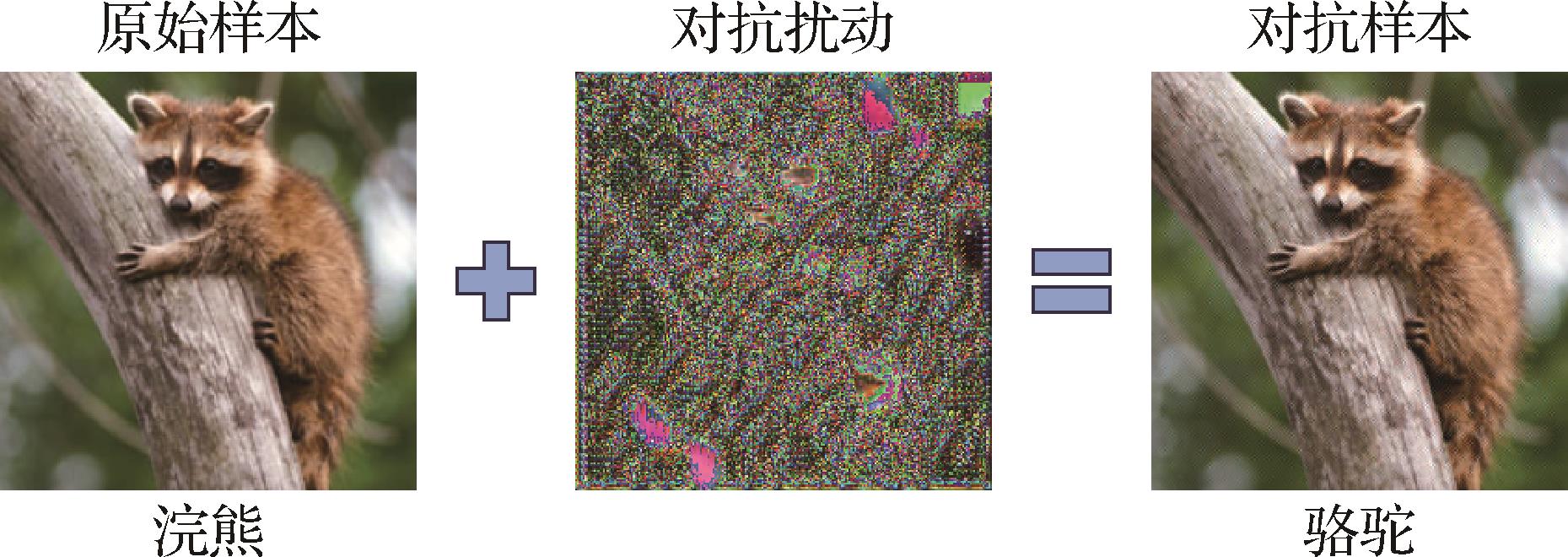

摘要:Due to their excellent performance, deep neural networks are increasingly utilized in various aspects of daily life, playing a crucial role in decision-making and providing assistance across various fields. However, the discovery of adversarial example poses a considerable threat to the reliability and security of deep neural networks, markedly limiting their application in safety-critical areas. Therefore, in recent years, researchers have conducted extensive studies on adversarial examples. This paper first introduces the research background and definitions related to adversarial examples, and categorizes existing adversarial attack techniques into white-box and black-box attacks. White-box attacks assume full transparency of the target model during the generation of adversarial examples, where the attack algorithm has access to the model’s architecture, parameters, loss function, and gradients to craft adversarial examples. Black-box attacks refer to scenarios where the target model is treated as opaque during the generation of adversarial examples. The attack algorithm has no access to internal information, such as the model’s architecture or parameters, and can only interact with the model through input-output queries. In contrast, white-box attacks can be categorized into three types based on their approach: optimization-based, gradient-based, and generation-based attacks. Specifically, optimization-based attacks formulate the generation of adversarial examples as a constrained optimization problem, which is approximately solved using optimization algorithms. Their main advantage lies in realizing high attack success rates with relatively small perturbations; however, they often incur substantial computational and time costs. Gradient-based attacks generate adversarial examples by computing perturbations in the direction of the loss function’s gradient, scaled by a predefined step size. These methods notably improve the efficiency of adversarial example generation but require continuous access to the model’s gradients. Meanwhile, generation-based attacks aim to produce adversarial examples by designing and training a generative model to attack the target model. Once trained, the generative model can efficiently generate adversarial examples without requiring access to the gradients or internal parameters of the target model, making it highly suitable for large-scale adversarial example generation. Black-box attacks can be categorized into two types: transfer-based and query-based attacks. Specifically, transfer-based attacks exploit the transferability of adversarial examples across different models by generating adversarial inputs against a surrogate model, which are then used to attack the target black-box model. The main advantage of this approach is that it does not require access to the target model or its internal information, resulting in low computational cost. However, differences between the surrogate and target models can lead to instability in attack performance. Query-based attacks work by gradually refining adversarial examples through repeated interactions with the target model. By submitting inputs and analyzing the corresponding outputs, these attacks aim to mislead the model without requiring any knowledge of its internal architecture. While they often achieve high success rates, their efficiency is hindered by the substantial number of queries required. With the continuous development of adversarial example generation algorithms, an increasing number of defense methods have emerged. The most widely adopted defense techniques can generally be categorized into three groups: adversarial training, adversarial detection, and adversarial denoising. Specifically, adversarial training is considered one of the most effective defense strategies against adversarial attacks. This training involves incorporating adversarial examples into the training process of the target model to enhance its robustness against adversarial perturbations. This approach is generally applicable and does not rely on specific attack methods; however, it suffers from high computational cost. In contrast to adversarial training, adversarial detection does not adjust the parameters of the target model. Instead, this detection introduces an auxiliary classifier to determine whether an input is an adversarial example. This approach offers good integrability with existing models; however, its generalization capability is often limited. Unlike adversarial training and adversarial detection, adversarial denoising focuses on eliminating adversarial perturbations from input data to prevent them from misleading the target model. The main advantage of adversarial denoising lies in the potential to restore adversarial examples to their original form; however, this process can occasionally degrade the quality of clean, unaltered inputs. Adversarial example techniques not only reveal the vulnerabilities of deep learning models but also inspire innovative applications in security-sensitive tasks. For instance, adversarial perturbations have been utilized to enhance system resilience in scenarios such as adversarial steganography, adversarial watermarking, model protection, and multimedia authentication. These cross-task explorations introduce new directions for adversarial example research and contribute to improving the practical deployment of artificial intelligence (AI) systems in complex environments. Therefore, this paper reviews various applications of adversarial examples within the fields of steganography and digital watermarking. Furthermore, a diverse set of adversarial example generation methods is selected and systematically evaluated in terms of attack success rate, robustness, and transferability across different target network architectures and benchmark datasets, including Caltech-256 and ImageNet. Finally, the study identifies current limitations in existing adversarial example research and highlights promising directions for future exploration, such as cross-domain adversarial attacks, robustness evaluation, real-world attack scenarios, and the development of systematic defense frameworks.关键词:adversarial example(AE);adversarial attack;adversarial defense;adversarial steganography;adversarial watermark600|270|0更新时间:2026-02-02

摘要:Due to their excellent performance, deep neural networks are increasingly utilized in various aspects of daily life, playing a crucial role in decision-making and providing assistance across various fields. However, the discovery of adversarial example poses a considerable threat to the reliability and security of deep neural networks, markedly limiting their application in safety-critical areas. Therefore, in recent years, researchers have conducted extensive studies on adversarial examples. This paper first introduces the research background and definitions related to adversarial examples, and categorizes existing adversarial attack techniques into white-box and black-box attacks. White-box attacks assume full transparency of the target model during the generation of adversarial examples, where the attack algorithm has access to the model’s architecture, parameters, loss function, and gradients to craft adversarial examples. Black-box attacks refer to scenarios where the target model is treated as opaque during the generation of adversarial examples. The attack algorithm has no access to internal information, such as the model’s architecture or parameters, and can only interact with the model through input-output queries. In contrast, white-box attacks can be categorized into three types based on their approach: optimization-based, gradient-based, and generation-based attacks. Specifically, optimization-based attacks formulate the generation of adversarial examples as a constrained optimization problem, which is approximately solved using optimization algorithms. Their main advantage lies in realizing high attack success rates with relatively small perturbations; however, they often incur substantial computational and time costs. Gradient-based attacks generate adversarial examples by computing perturbations in the direction of the loss function’s gradient, scaled by a predefined step size. These methods notably improve the efficiency of adversarial example generation but require continuous access to the model’s gradients. Meanwhile, generation-based attacks aim to produce adversarial examples by designing and training a generative model to attack the target model. Once trained, the generative model can efficiently generate adversarial examples without requiring access to the gradients or internal parameters of the target model, making it highly suitable for large-scale adversarial example generation. Black-box attacks can be categorized into two types: transfer-based and query-based attacks. Specifically, transfer-based attacks exploit the transferability of adversarial examples across different models by generating adversarial inputs against a surrogate model, which are then used to attack the target black-box model. The main advantage of this approach is that it does not require access to the target model or its internal information, resulting in low computational cost. However, differences between the surrogate and target models can lead to instability in attack performance. Query-based attacks work by gradually refining adversarial examples through repeated interactions with the target model. By submitting inputs and analyzing the corresponding outputs, these attacks aim to mislead the model without requiring any knowledge of its internal architecture. While they often achieve high success rates, their efficiency is hindered by the substantial number of queries required. With the continuous development of adversarial example generation algorithms, an increasing number of defense methods have emerged. The most widely adopted defense techniques can generally be categorized into three groups: adversarial training, adversarial detection, and adversarial denoising. Specifically, adversarial training is considered one of the most effective defense strategies against adversarial attacks. This training involves incorporating adversarial examples into the training process of the target model to enhance its robustness against adversarial perturbations. This approach is generally applicable and does not rely on specific attack methods; however, it suffers from high computational cost. In contrast to adversarial training, adversarial detection does not adjust the parameters of the target model. Instead, this detection introduces an auxiliary classifier to determine whether an input is an adversarial example. This approach offers good integrability with existing models; however, its generalization capability is often limited. Unlike adversarial training and adversarial detection, adversarial denoising focuses on eliminating adversarial perturbations from input data to prevent them from misleading the target model. The main advantage of adversarial denoising lies in the potential to restore adversarial examples to their original form; however, this process can occasionally degrade the quality of clean, unaltered inputs. Adversarial example techniques not only reveal the vulnerabilities of deep learning models but also inspire innovative applications in security-sensitive tasks. For instance, adversarial perturbations have been utilized to enhance system resilience in scenarios such as adversarial steganography, adversarial watermarking, model protection, and multimedia authentication. These cross-task explorations introduce new directions for adversarial example research and contribute to improving the practical deployment of artificial intelligence (AI) systems in complex environments. Therefore, this paper reviews various applications of adversarial examples within the fields of steganography and digital watermarking. Furthermore, a diverse set of adversarial example generation methods is selected and systematically evaluated in terms of attack success rate, robustness, and transferability across different target network architectures and benchmark datasets, including Caltech-256 and ImageNet. Finally, the study identifies current limitations in existing adversarial example research and highlights promising directions for future exploration, such as cross-domain adversarial attacks, robustness evaluation, real-world attack scenarios, and the development of systematic defense frameworks.关键词:adversarial example(AE);adversarial attack;adversarial defense;adversarial steganography;adversarial watermark600|270|0更新时间:2026-02-02 - “相关专家针对AIGC技术生成的高逼真伪造人脸视频问题,构建了面向中文场景的量化评估基准,建立了CHN-DF数据集,为推动防伪检测技术迭代发展提供数据支撑与实践指导。”

摘要:ObjectiveThe rapid advancement of AI-generated content (AIGC) technologies has enabled the creation of hyper-realistic synthetic face videos, posing unprecedented challenges to human visual perception systems. While existing face anti-spoofing detection algorithms demonstrate promising performance on Western-centric datasets, their effectiveness and applicability remain unverified in Chinese-specific contexts due to the lack of standardized evaluation benchmarks. This study aims to establish a quantitative assessment framework tailored for Chinese scenarios, driving the iterative development of anti-spoofing technologies.MethodAiming to address this issue, the CHN-DF dataset, the first large-scale benchmark dedicated to Chinese face video forgery detection, is proposed. The dataset construction process involves several critical stages, including data collection, fake sample generation, and quality assessment. Data collection was conducted in complex environmental settings, such as varying lighting conditions and occlusions, ensuring the diverse and challenging nature of the dataset. Over 190 000 dialogue clips from 2 540 real Chinese identity videos were collected across multiple sources, ensuring rich dataset variability. Twelve advanced AIGC tools that incorporate mainstream and cutting-edge methods based on diffusion, generative adversarial networks(GANs), and hybrid architectures are employed to generate the forged samples. These tools produced 434 727 mixed fake samples, covering seven types of manipulation techniques, such as expression transfer, lip-sync tampering, and audiovisual dissonance. Aiming to ensure data quality and integrity, human perception and automated detection techniques were employed in a dual-layer quality control process. This approach included an assessment framework that considers visual and auditory modalities to create a comprehensive evaluation standard that reflects the challenges encountered by anti-spoofing models in real-world applications. A system-level evaluation benchmark based on deep learning detection models was developed to further enhance the effectiveness of the dataset. This benchmark spans a variety of deepfake detection models, including state-of-the-art (SOTA) and widely used algorithms, to test the robustness and generalization capabilities of these models across different environmental and contextual factors. The goal was to test the adaptability of the detection algorithms to the complexities presented by the CHN-DF dataset.ResultThe CHN-DF dataset, which represents the world’s first Chinese language-based face video anti-spoofing dataset, contains a total of 434 727 samples. This dataset is designed to be diverse and complex, posing substantial challenges for current deepfake detection algorithms. In experimental evaluations using 16 different models, including SOTA and mainstream anti-spoofing models, the accuracy of detecting deepfake faces in this dataset was found to be lower than expected, thereby highlighting task complexity. Specifically, the accuracy for visual and audiovisual combined tests was below 85% and 70%, respectively. These results indicate the increased difficulty in accurately detecting deepfake faces in the CHN-DF dataset, demonstrating that current models are still far from perfect. Further analysis revealed the effectiveness of the evaluation benchmark in highlighting the limitations of existing algorithms. In cross-domain generalization tests, the performance of models fluctuated by an average of 19.6%, indicating substantial variations in model accuracy across different scenarios. This variability emphasizes the need for robust and adaptable algorithms that can handle diverse environmental conditions and complex manipulations in the CHN-DF dataset. Moreover, the evaluation benchmark demonstrated the need for integrating visual and auditory modalities for face video anti-spoofing detection. In numerous cases, models that relied solely on visual features failed to accurately detect fake videos, highlighting the importance of incorporating multimodal features for improved detection accuracy.ConclusionThe CHN-DF dataset and its associated evaluation benchmark effectively fill a critical gap in the field of face video anti-spoofing detection for Chinese language scenarios. Using systematic experimentation, this research clarifies the relationship between dataset characteristics and algorithm performance, elucidating the underlying mechanisms that contribute to the challenges faced by current models. Based on these findings, a roadmap for future improvements in the field is proposed, focusing on key directions such as enhancing model robustness and improving cross-modal generalization capabilities. These insights are crucial for the practical deployment of face video anti-spoofing detection technologies, offering theoretical support and practical guidance for future development. The CHN-DF dataset and evaluation protocol have been made publicly available on GitHub at https://github.com/HengruiLou/CHN-DF, serving as a valuable resource for researchers and practitioners in the field. This dataset serves not only as a technical reference but also as a catalyst for global collaboration in the fight against sophisticated, culturally specific deepfake threats. Future work will extend the dataset to include minority dialects. The dataset is linked at https://doi.org/10.57760/sciencedb.j00240.00067 and https://github.com/HengruiLou/CHN-DF.关键词:deepfake;deepfake face videos;face forgery evaluation benchmark;chinese dataset;multimodal186|199|0更新时间:2026-02-02

摘要:ObjectiveThe rapid advancement of AI-generated content (AIGC) technologies has enabled the creation of hyper-realistic synthetic face videos, posing unprecedented challenges to human visual perception systems. While existing face anti-spoofing detection algorithms demonstrate promising performance on Western-centric datasets, their effectiveness and applicability remain unverified in Chinese-specific contexts due to the lack of standardized evaluation benchmarks. This study aims to establish a quantitative assessment framework tailored for Chinese scenarios, driving the iterative development of anti-spoofing technologies.MethodAiming to address this issue, the CHN-DF dataset, the first large-scale benchmark dedicated to Chinese face video forgery detection, is proposed. The dataset construction process involves several critical stages, including data collection, fake sample generation, and quality assessment. Data collection was conducted in complex environmental settings, such as varying lighting conditions and occlusions, ensuring the diverse and challenging nature of the dataset. Over 190 000 dialogue clips from 2 540 real Chinese identity videos were collected across multiple sources, ensuring rich dataset variability. Twelve advanced AIGC tools that incorporate mainstream and cutting-edge methods based on diffusion, generative adversarial networks(GANs), and hybrid architectures are employed to generate the forged samples. These tools produced 434 727 mixed fake samples, covering seven types of manipulation techniques, such as expression transfer, lip-sync tampering, and audiovisual dissonance. Aiming to ensure data quality and integrity, human perception and automated detection techniques were employed in a dual-layer quality control process. This approach included an assessment framework that considers visual and auditory modalities to create a comprehensive evaluation standard that reflects the challenges encountered by anti-spoofing models in real-world applications. A system-level evaluation benchmark based on deep learning detection models was developed to further enhance the effectiveness of the dataset. This benchmark spans a variety of deepfake detection models, including state-of-the-art (SOTA) and widely used algorithms, to test the robustness and generalization capabilities of these models across different environmental and contextual factors. The goal was to test the adaptability of the detection algorithms to the complexities presented by the CHN-DF dataset.ResultThe CHN-DF dataset, which represents the world’s first Chinese language-based face video anti-spoofing dataset, contains a total of 434 727 samples. This dataset is designed to be diverse and complex, posing substantial challenges for current deepfake detection algorithms. In experimental evaluations using 16 different models, including SOTA and mainstream anti-spoofing models, the accuracy of detecting deepfake faces in this dataset was found to be lower than expected, thereby highlighting task complexity. Specifically, the accuracy for visual and audiovisual combined tests was below 85% and 70%, respectively. These results indicate the increased difficulty in accurately detecting deepfake faces in the CHN-DF dataset, demonstrating that current models are still far from perfect. Further analysis revealed the effectiveness of the evaluation benchmark in highlighting the limitations of existing algorithms. In cross-domain generalization tests, the performance of models fluctuated by an average of 19.6%, indicating substantial variations in model accuracy across different scenarios. This variability emphasizes the need for robust and adaptable algorithms that can handle diverse environmental conditions and complex manipulations in the CHN-DF dataset. Moreover, the evaluation benchmark demonstrated the need for integrating visual and auditory modalities for face video anti-spoofing detection. In numerous cases, models that relied solely on visual features failed to accurately detect fake videos, highlighting the importance of incorporating multimodal features for improved detection accuracy.ConclusionThe CHN-DF dataset and its associated evaluation benchmark effectively fill a critical gap in the field of face video anti-spoofing detection for Chinese language scenarios. Using systematic experimentation, this research clarifies the relationship between dataset characteristics and algorithm performance, elucidating the underlying mechanisms that contribute to the challenges faced by current models. Based on these findings, a roadmap for future improvements in the field is proposed, focusing on key directions such as enhancing model robustness and improving cross-modal generalization capabilities. These insights are crucial for the practical deployment of face video anti-spoofing detection technologies, offering theoretical support and practical guidance for future development. The CHN-DF dataset and evaluation protocol have been made publicly available on GitHub at https://github.com/HengruiLou/CHN-DF, serving as a valuable resource for researchers and practitioners in the field. This dataset serves not only as a technical reference but also as a catalyst for global collaboration in the fight against sophisticated, culturally specific deepfake threats. Future work will extend the dataset to include minority dialects. The dataset is linked at https://doi.org/10.57760/sciencedb.j00240.00067 and https://github.com/HengruiLou/CHN-DF.关键词:deepfake;deepfake face videos;face forgery evaluation benchmark;chinese dataset;multimodal186|199|0更新时间:2026-02-02 - “相关专家在图像和视频合成检测领域取得新进展,提出融合物理与深度学习的检测方法,创新结合光照和阴影一致性分析,有效提升检测精度和适应性,为合成内容检测提供新思路。”

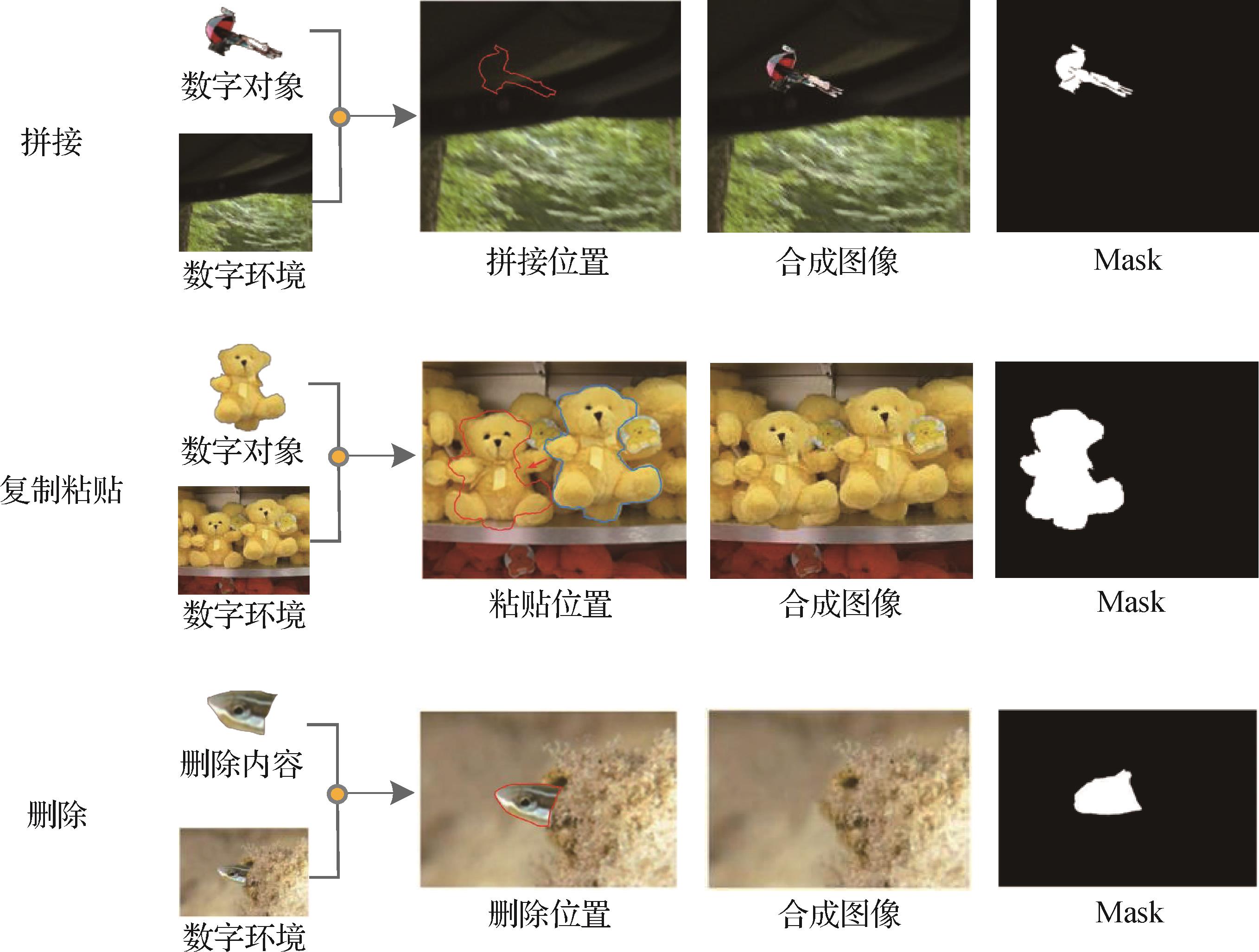

摘要:ObjectiveSynthesis and forgery of media content such as images and videos have become common methods in media post-production. As the technical barriers gradually decrease, the Internet is disseminated with a large amount of synthetic materials. This situation introduces great challenges to the integrity and security of digital media, which affects the authenticity and safety of audiovisual content. In the realm of synthetic media detection, research has greatly increased in recent years. Most commonly used detection methods focus on physical attributes or directly utilize deep neural networks for detection. Image forgery detection methods based on physical attributes can be broadly divided into those that utilize physical features and those that employ deep neural networks. Methods relying on physical features identify visual inconsistencies in images. These methods leverage the fact that synthetic images are often composed of multiple stitched images, which can result in irregularities in lighting, shadows, depth of field, and object distance. Inconsistency detection involves examining inherent physical properties such as lighting and shadows to assess their coherence. Lighting detection methods primarily analyze factors such as light source position, chromatic deviation, object reflectivity, light source color, and local light sources. Shadow detection methods focus on properties such as planar homology, shadow constraints, vanishing point positions for light and shadow, and consistency in geometric relationships. However, due to the diverse and complex environments in which images are acquired, the high precision required by these physical detection methods causes difficulty in relying solely on a single approach for forgery detection in real-world scenarios. In image forgery detection methods based on deep neural networks, some approaches use long short-term memory (LSTM), dual stream parallel network, pyramid architectures, and Transformers to conduct synthesis detection by identifying anomalies in local regions between image blocks while locating synthesized areas. While these methods have demonstrated certain levels of success in experimental settings, the relentless advancement in synthesis technology has resulted in synthetic images that are increasingly indistinguishable from authentic ones. As a result, detection methods relying solely on visual anomalies or signs of synthesis can no longer meet the growing demands for accuracy in detection.MethodWe present a novel approach called the lighting and shadow fusion network (LSFNet) to image forgery detection based on the consistency of lighting and shadows. It combines highly sensitive physical attribute detection with robust deep neural network techniques, which improves the capability to identify even the most meticulously crafted synthetic images. LSFNet comprises key modules, including illumination maps, object and shadow estimation, illumination analysis, and homogeneity analysis. Its goal is to integrate physical detection methods including illumination and shadow with deep neural network techniques for effective detection of well-crafted synthetic images. The method begins by creating an illumination map that labels the object and shadow areas, which results in a boundary illumination map formed by overlapping the illumination map with the object area. This study employs a feature extraction network to derive features from the boundary illumination map and the original image. As a result, a feature fusion network for consistency analysis of the illumination map and light intensity is established to determine the shooting environment of objects within the image. It estimates distances for objects and shadows using cross-ratio for homogeneous vertex estimation to ensure consistent detection of lighting direction in images. Finally, this study utilizes result fusion methods to comprehensively evaluate lighting analysis results and homogeneity analysis results to predict outcomes for synthetic images. Recognizing that existing datasets for synthetic image detection often lack detailed information regarding physical attributes, we introduce a new dataset designed to assist in detecting synthetic images by incorporating essential characteristics such as lighting conditions and object distances crucial for accurately identifying forgery in the complex digital media landscape at present.ResultExperiments conducted on the NIST 16 (National Institute of Standards and Technology Database 16), Coverage, and CASIA (Chinese Academy of Sciences Institute of Automation Database) datasets demonstrate that our method achieves AUC (area under the curve) scores of 94.2%, 93.6%, and 90.3%, respectively, and F1 scores of 80.2%, 79.3%, and 58.1%, outperforming the compared methods. In noise attack experiments, our method exhibits stronger adaptability to size variation, Gaussian blur, Gaussian noise, and JPEG (Joint Photographic Experts Group) compression, with an average AUC of 84.03%.ConclusionThe contributions of this study are summarized as follows: 1) we propose a versatile image forgery detection framework for high-fidelity synthetic images, which is called LSFNet. This framework integrates deep neural networks with physical detection methods and combines boundary lighting map detection and physical attribute consistency analysis. Experimental results show that our method outperforms mainstream techniques in accuracy and demonstrates robustness, which makes it suitable for various synthetic image detection tasks; 2) We present a shadow extraction and consistency analysis method tailored for image forgery detection. This approach, which utilizes Mask R-CNN as the backbone network for shadow prediction, models the relationship between shadow regions and real object areas. By assessing consistency through intersection over union, it significantly enhances the accuracy of image forgery detection; 3) We introduce a synthetic image detection dataset featuring physical attributes such as light source position, color temperature, camera position, object distance, depth of field, and complex backgrounds. The dataset is called the synthetic image physical properties detection dataset. This dataset comprises 22 203 images——15 012 collected images and 7 191 synthetically generated ones——all meticulously crafted by professionals to ensure high realism. The dataset maintains a training-validation-testing split of 7∶2∶1. It includes various synthesis methods such as stitching, copy-pasting, and deletion to effectively support training testing and optimization upgrades for image forgery detection models. The dataset is linked at: https://doi.org/10.57760/sciencedb.j00240.00069.关键词:Synthetic image detection;Illumination detection;shadow detection;detection dataset;artificial intelligence security241|283|0更新时间:2026-02-02

摘要:ObjectiveSynthesis and forgery of media content such as images and videos have become common methods in media post-production. As the technical barriers gradually decrease, the Internet is disseminated with a large amount of synthetic materials. This situation introduces great challenges to the integrity and security of digital media, which affects the authenticity and safety of audiovisual content. In the realm of synthetic media detection, research has greatly increased in recent years. Most commonly used detection methods focus on physical attributes or directly utilize deep neural networks for detection. Image forgery detection methods based on physical attributes can be broadly divided into those that utilize physical features and those that employ deep neural networks. Methods relying on physical features identify visual inconsistencies in images. These methods leverage the fact that synthetic images are often composed of multiple stitched images, which can result in irregularities in lighting, shadows, depth of field, and object distance. Inconsistency detection involves examining inherent physical properties such as lighting and shadows to assess their coherence. Lighting detection methods primarily analyze factors such as light source position, chromatic deviation, object reflectivity, light source color, and local light sources. Shadow detection methods focus on properties such as planar homology, shadow constraints, vanishing point positions for light and shadow, and consistency in geometric relationships. However, due to the diverse and complex environments in which images are acquired, the high precision required by these physical detection methods causes difficulty in relying solely on a single approach for forgery detection in real-world scenarios. In image forgery detection methods based on deep neural networks, some approaches use long short-term memory (LSTM), dual stream parallel network, pyramid architectures, and Transformers to conduct synthesis detection by identifying anomalies in local regions between image blocks while locating synthesized areas. While these methods have demonstrated certain levels of success in experimental settings, the relentless advancement in synthesis technology has resulted in synthetic images that are increasingly indistinguishable from authentic ones. As a result, detection methods relying solely on visual anomalies or signs of synthesis can no longer meet the growing demands for accuracy in detection.MethodWe present a novel approach called the lighting and shadow fusion network (LSFNet) to image forgery detection based on the consistency of lighting and shadows. It combines highly sensitive physical attribute detection with robust deep neural network techniques, which improves the capability to identify even the most meticulously crafted synthetic images. LSFNet comprises key modules, including illumination maps, object and shadow estimation, illumination analysis, and homogeneity analysis. Its goal is to integrate physical detection methods including illumination and shadow with deep neural network techniques for effective detection of well-crafted synthetic images. The method begins by creating an illumination map that labels the object and shadow areas, which results in a boundary illumination map formed by overlapping the illumination map with the object area. This study employs a feature extraction network to derive features from the boundary illumination map and the original image. As a result, a feature fusion network for consistency analysis of the illumination map and light intensity is established to determine the shooting environment of objects within the image. It estimates distances for objects and shadows using cross-ratio for homogeneous vertex estimation to ensure consistent detection of lighting direction in images. Finally, this study utilizes result fusion methods to comprehensively evaluate lighting analysis results and homogeneity analysis results to predict outcomes for synthetic images. Recognizing that existing datasets for synthetic image detection often lack detailed information regarding physical attributes, we introduce a new dataset designed to assist in detecting synthetic images by incorporating essential characteristics such as lighting conditions and object distances crucial for accurately identifying forgery in the complex digital media landscape at present.ResultExperiments conducted on the NIST 16 (National Institute of Standards and Technology Database 16), Coverage, and CASIA (Chinese Academy of Sciences Institute of Automation Database) datasets demonstrate that our method achieves AUC (area under the curve) scores of 94.2%, 93.6%, and 90.3%, respectively, and F1 scores of 80.2%, 79.3%, and 58.1%, outperforming the compared methods. In noise attack experiments, our method exhibits stronger adaptability to size variation, Gaussian blur, Gaussian noise, and JPEG (Joint Photographic Experts Group) compression, with an average AUC of 84.03%.ConclusionThe contributions of this study are summarized as follows: 1) we propose a versatile image forgery detection framework for high-fidelity synthetic images, which is called LSFNet. This framework integrates deep neural networks with physical detection methods and combines boundary lighting map detection and physical attribute consistency analysis. Experimental results show that our method outperforms mainstream techniques in accuracy and demonstrates robustness, which makes it suitable for various synthetic image detection tasks; 2) We present a shadow extraction and consistency analysis method tailored for image forgery detection. This approach, which utilizes Mask R-CNN as the backbone network for shadow prediction, models the relationship between shadow regions and real object areas. By assessing consistency through intersection over union, it significantly enhances the accuracy of image forgery detection; 3) We introduce a synthetic image detection dataset featuring physical attributes such as light source position, color temperature, camera position, object distance, depth of field, and complex backgrounds. The dataset is called the synthetic image physical properties detection dataset. This dataset comprises 22 203 images——15 012 collected images and 7 191 synthetically generated ones——all meticulously crafted by professionals to ensure high realism. The dataset maintains a training-validation-testing split of 7∶2∶1. It includes various synthesis methods such as stitching, copy-pasting, and deletion to effectively support training testing and optimization upgrades for image forgery detection models. The dataset is linked at: https://doi.org/10.57760/sciencedb.j00240.00069.关键词:Synthetic image detection;Illumination detection;shadow detection;detection dataset;artificial intelligence security241|283|0更新时间:2026-02-02 - “相关领域专家构建了基于多域特征融合的多分支网络框架MBMD,为解决现有检测方法泛化能力受限问题提供了新方案。”

摘要:ObjectiveIn recent years, the rapid development of deepfake technology has led to the proliferation of realistic manipulated videos on social media. Even non-expert users can easily manipulate facial content using various applications and open-source tools. Given the sensitivity of personal identity information, false content tends to spread rapidly on the internet, which raises public concerns regarding video authenticity and personal privacy security. Although existing deepfake detection methods perform well in specific scenarios, their performance often degrades considerably when confronted with the ever-changing conditions of real-world scenarios. In other words, detection performance degrades when the forgery technique is unknown, which severely affects the performance of most deepfake detection methods in real-world applications. Furthermore, given that the existing deepfake detection methods based on convolutional neural networks often focus on either global or local spatiotemporal features, they still exhibit complexity in capturing global features and temporal information. The difficulty in capturing comprehensive forgery clues limits the generalization capability of detection methods. To address these issues, a multi-branch network based on multi-domain feature fusion is proposed in this study. This network comprehensively utilizes frequency, spatial, and spatiotemporal domain information to mine more detailed and comprehensive forgery clues.MethodIn the frequency stream, the discrete cosine transform is applied to the images, the low-frequency components that introduce additional noise and information redundancy are removed, and the high-frequency components are retained to capture frequency features of subtle structural changes in the images. In the spatial stream, a spatial feature enhancement block is designed to enhance the shallow features of CNN at multiple scales, and local abnormal regions in the images are captured. In the spatiotemporal stream, the Vision Transformer is used to process the frame sequence, and the global high-level features are obtained to perceive global spatiotemporal information. An information supplement block is developed to combine local features from the spatial stream with global high-level features captured by the Vision Transformer. As a result, the network can comprehensively capture global and local spatiotemporal inconsistencies. Finally, an interactive fusion module is used to enhance and fuse information from the frequency, spatial, and spatiotemporal domains to extract more detailed and comprehensive features for deepfake detection.ResultA systematic comparison was conducted between the proposed method and the state-of-the-art approaches across multiple datasets. In cross-dataset generalization experiments, the advantages of the proposed method were even more pronounced: on the Celeb-DF-v2 dataset, compared to the second-best performing detection model, the proposed method improved ACC by 2.63% and AUC by 3.01%; on the more challenging DFDC dataset, compared to the latest detection model, the proposed method achieved a notable improvement of 4.43% in AUC, highlighting its superior cross-domain adaptability. To further investigate the contribution of the model design to generalization performance, a systematic ablation study was conducted, which thoroughly analyzed the impact of different modules in cross-dataset scenarios. The experimental results clearly validate the effectiveness of each key component and explain the mechanism through which the proposed method enhances generalization capability from a quantitative perspective. This comprehensively confirms the innovativeness and practical utility of the proposed approach.ConclusionThe proposed method has been validated through systematic comparison with state-of-the-art approaches on multiple datasets. It not only achieves advanced performance levels but also demonstrates excellent cross-domain adaptability and interpretability, providing an effective solution for research in related fields.关键词:Deepfake detection;digital image forensics;multi-domain feature fusion;multi-branch;local-global role200|251|0更新时间:2026-02-02

摘要:ObjectiveIn recent years, the rapid development of deepfake technology has led to the proliferation of realistic manipulated videos on social media. Even non-expert users can easily manipulate facial content using various applications and open-source tools. Given the sensitivity of personal identity information, false content tends to spread rapidly on the internet, which raises public concerns regarding video authenticity and personal privacy security. Although existing deepfake detection methods perform well in specific scenarios, their performance often degrades considerably when confronted with the ever-changing conditions of real-world scenarios. In other words, detection performance degrades when the forgery technique is unknown, which severely affects the performance of most deepfake detection methods in real-world applications. Furthermore, given that the existing deepfake detection methods based on convolutional neural networks often focus on either global or local spatiotemporal features, they still exhibit complexity in capturing global features and temporal information. The difficulty in capturing comprehensive forgery clues limits the generalization capability of detection methods. To address these issues, a multi-branch network based on multi-domain feature fusion is proposed in this study. This network comprehensively utilizes frequency, spatial, and spatiotemporal domain information to mine more detailed and comprehensive forgery clues.MethodIn the frequency stream, the discrete cosine transform is applied to the images, the low-frequency components that introduce additional noise and information redundancy are removed, and the high-frequency components are retained to capture frequency features of subtle structural changes in the images. In the spatial stream, a spatial feature enhancement block is designed to enhance the shallow features of CNN at multiple scales, and local abnormal regions in the images are captured. In the spatiotemporal stream, the Vision Transformer is used to process the frame sequence, and the global high-level features are obtained to perceive global spatiotemporal information. An information supplement block is developed to combine local features from the spatial stream with global high-level features captured by the Vision Transformer. As a result, the network can comprehensively capture global and local spatiotemporal inconsistencies. Finally, an interactive fusion module is used to enhance and fuse information from the frequency, spatial, and spatiotemporal domains to extract more detailed and comprehensive features for deepfake detection.ResultA systematic comparison was conducted between the proposed method and the state-of-the-art approaches across multiple datasets. In cross-dataset generalization experiments, the advantages of the proposed method were even more pronounced: on the Celeb-DF-v2 dataset, compared to the second-best performing detection model, the proposed method improved ACC by 2.63% and AUC by 3.01%; on the more challenging DFDC dataset, compared to the latest detection model, the proposed method achieved a notable improvement of 4.43% in AUC, highlighting its superior cross-domain adaptability. To further investigate the contribution of the model design to generalization performance, a systematic ablation study was conducted, which thoroughly analyzed the impact of different modules in cross-dataset scenarios. The experimental results clearly validate the effectiveness of each key component and explain the mechanism through which the proposed method enhances generalization capability from a quantitative perspective. This comprehensively confirms the innovativeness and practical utility of the proposed approach.ConclusionThe proposed method has been validated through systematic comparison with state-of-the-art approaches on multiple datasets. It not only achieves advanced performance levels but also demonstrates excellent cross-domain adaptability and interpretability, providing an effective solution for research in related fields.关键词:Deepfake detection;digital image forensics;multi-domain feature fusion;multi-branch;local-global role200|251|0更新时间:2026-02-02 - “虹膜呈现攻击检测领域迎来新突破,相关专家构建了基于空间域与频域特征融合的虹膜PAD模型,为提升虹膜识别系统在跨域场景下的安全性和合成虹膜检测能力提供了有效方案。”